The landscape of modern artificial intelligence is undergoing a fundamental architectural shift, moving from the raw power of massive GPU clusters toward the specialized efficiency of high-performance CPUs. This transition is nowhere more evident than in Meta's recent landmark agreement to secure millions of Amazon AI CPUs. By integrating AWS Graviton chips into its infrastructure, the social media giant is pivoting its focus toward the burgeoning realm of inference and agentic workflows.

The Rise of Agentic Computing and Amazon AI CPUs

The distinction between training a model and running it is becoming the defining boundary of silicon architecture. For years, the industry spotlight remained fixed on GPUs, which are essential for the heavy lifting required to train large language models (LLMs) from scratch. However, once those models are operational, the workload changes significantly.

The industry is now pivoting toward AI agents: autonomous systems capable of real-time reasoning, complex code generation, and multi-step task coordination. These agentic workloads do not always require the massive parallel processing power of a GPU; many instead depend on the efficient, low-latency performance that advanced CPUs provide.

Leveraging ARM Architecture

Amazon’s Graviton series, built on ARM architecture, is engineered for exactly these efficiency-driven, latency-sensitive tasks. By deploying millions of these Amazon AI CPUs, Meta is signaling that the next stage of AI development will be defined not just by how large a model can be trained, but by how efficiently it can act as an intelligent, reasoning entity in real-time environments.

Reshaping Cloud Rivalries and Infrastructure

This massive hardware deployment represents more than just a technical upgrade; it is a significant competitive shift in the cloud computing landscape. For much of the recent past, Meta has been diversifying its infrastructure spend, including a massive six-year, $10 billion deal with Google Cloud.

This new move toward AWS suggests that Amazon is successfully reclaiming a central role in Meta's long-term AI strategy. The timing of the announcement also highlights the intense competition between the "Big Three" cloud providers, arriving just as the Google Cloud Next conference concluded. This deal serves as a powerful rebuttal to Google’s own custom silicon advancements.

The current landscape of AI-specialized silicon is becoming increasingly fragmented:

- AWS Graviton: An ARM-based CPU optimized for high-efficiency AI agent workloads and inference.

- AWS Trainium: Amazon's custom AI accelerator, built primarily for training large models.

- Nvidia Vera: A looming competitor in the ARM-based CPU space, designed specifically for agentic tasks.

- Google Custom Silicon: Proprietary chips used by Google to maintain its edge in large-scale model training.

The Battle for Price-Performance

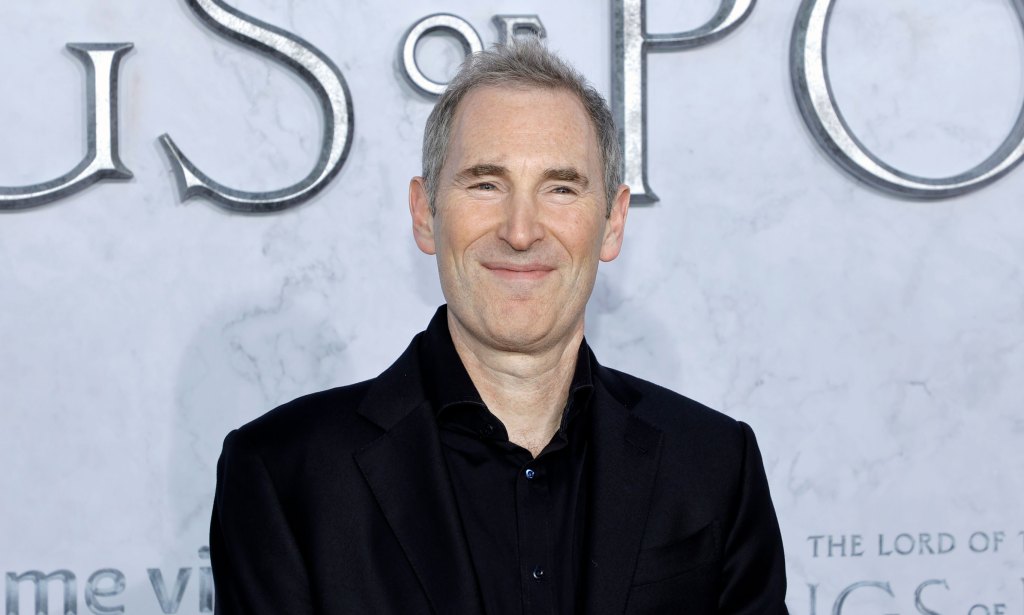

The hardware war is no longer just about who has the fastest chip, but who offers the best price-performance ratio. Amazon CEO Andy Jassy has been explicit in his mission to win enterprise deals by focusing on this metric. This strategy is particularly relevant as the cost of running AI at scale threatens to become unsustainable for even the largest tech conglomerates.
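To make the price-performance metric concrete, here is a minimal Python sketch. Every name and number in it is a hypothetical placeholder, not a measured or published figure, and the metric chosen (requests served per dollar) is just one common way to express price-performance, assumed here for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instance:
    """A hypothetical compute option; all figures are illustrative only."""
    name: str
    hourly_cost_usd: float      # assumed price per hour
    requests_per_second: float  # assumed sustained inference throughput


def requests_per_dollar(inst: Instance) -> float:
    """Price-performance expressed as requests served per dollar spent."""
    return inst.requests_per_second * 3600 / inst.hourly_cost_usd


def best_value(options: list[Instance]) -> Instance:
    """Return the option that serves the most requests per dollar."""
    return max(options, key=requests_per_dollar)


# Hypothetical numbers chosen only to show the trade-off.
options = [
    Instance("fast-accelerator", hourly_cost_usd=4.00, requests_per_second=200.0),
    Instance("efficient-cpu", hourly_cost_usd=1.00, requests_per_second=80.0),
]

for inst in options:
    print(f"{inst.name}: {requests_per_dollar(inst):,.0f} requests per dollar")
print("best value:", best_value(options).name)
```

In this made-up case the slower chip wins on value: it serves fewer requests per second but more per dollar, which is the kind of trade-off a price-performance pitch relies on.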

While Amazon’s Trainium chips are already being heavily utilized by companies like Anthropic, the Graviton deal provides a different kind of victory for AWS. It demonstrates that the cloud provider can offer a tiered approach to AI: Trainium for heavy-duty training and Graviton CPUs for the ubiquitous, high-frequency tasks of reasoning and execution.

As Meta scales its agentic capabilities, the pressure on Amazon's internal silicon teams will only intensify. They must now prove that their custom architecture can support the world's most sophisticated AI ecosystems without relying on traditional, high-cost GPUs. The future of the AI era may well be written in ARM instructions rather than CUDA kernels.