Meta is rolling out a controversial strategy to bolster platform safety with machine learning. The company has deployed an AI system designed to detect whether users are under the age of 13 by analyzing visual cues in photos and videos. By estimating physical traits such as height and bone structure, Meta aims to proactively identify underage accounts across Facebook and Instagram.

How Meta Uses AI to Analyze Age

The technical architecture behind this initiative relies on sophisticated computer vision models trained on millions of annotated images. Rather than using traditional facial recognition to identify specific individuals, the system focuses on biometric proxies to infer age-related metrics indirectly.

Key components of this visual analysis include:

- Height Estimation: Utilizing 3D depth mapping in AR environments or silhouette analysis against known reference objects.

- Skeletal Structure Patterns: Assessing bone structure cues inferred from posture, movement, and how users interact within virtual spaces.

Meta maintains that these methods are non-intrusive, claiming that no facial features are extracted or stored in a way that violates the GDPR. However, the reliability of using height and bone structure to determine age remains a point of contention among experts, as clothing, footwear, and posture can easily skew results.
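To make the footwear concern concrete, here is a minimal sketch of reference-object scaling, one simple way a height could be estimated from a single image. It is not Meta's implementation: the function name `estimate_height_cm`, the door-frame reference, and every measurement are hypothetical, and the sketch assumes the person and the reference object stand at the same distance from the camera.

```python
def estimate_height_cm(person_px: float, reference_px: float, reference_cm: float) -> float:
    """Convert a pixel height to centimetres using a reference object of known size.

    Assumes the person and the reference object are at the same depth,
    so one pixel covers the same real-world length for both.
    """
    if person_px <= 0 or reference_px <= 0 or reference_cm <= 0:
        raise ValueError("all measurements must be positive")
    return person_px * (reference_cm / reference_px)


# Hypothetical scene: a 200 cm door frame spans 800 px, so 1 px covers 0.25 cm.
barefoot = estimate_height_cm(person_px=580, reference_px=800, reference_cm=200.0)

# The same person in 4 cm thick-soled shoes spans 16 px more at this scale.
with_shoes = estimate_height_cm(person_px=596, reference_px=800, reference_cm=200.0)

print(barefoot, with_shoes)  # 145.0 149.0
```

In this illustration a pair of shoes shifts the estimate by 4 cm, which could be enough to move a borderline user across whatever height threshold a classifier relies on.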

Regulatory Pressure and Legal Risks

This move toward AI-driven age verification follows intense global scrutiny of how Meta handles the safety of minors. The company is currently facing significant legal hurdles, including a recent $375 million penalty ordered by a New Mexico jury for misleading consumers about platform safety.

As child safety lawsuits surge globally, Meta’s shift toward proactive detection is an attempt to mitigate risk. However, the implementation of such technology presents new ethical dilemmas:

- False Positives: Incorrectly flagging users could lead to unwarranted account deactivations.

- Disproportionate Impact: Marginalized communities may be unfairly targeted if they lack easy access to official verification documents like birth certificates.

- Surveillance Concerns: Privacy advocates warn that analyzing physical traits could normalize the mass surveillance of minors.
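The false-positive risk above is largely a base-rate problem: when underage accounts are rare, even an accurate detector flags many legitimate users. The sketch below works through the arithmetic. All three rates are hypothetical values chosen for illustration and are not figures published by Meta; `flag_precision` is likewise an illustrative name.

```python
def flag_precision(prevalence: float, sensitivity: float, false_positive_rate: float) -> float:
    """Return the share of flagged accounts that are actually underage.

    prevalence: fraction of all accounts that are underage
    sensitivity: fraction of underage accounts the detector flags
    false_positive_rate: fraction of legitimate accounts flagged by mistake
    """
    true_flags = prevalence * sensitivity
    false_flags = (1 - prevalence) * false_positive_rate
    total_flags = true_flags + false_flags
    return true_flags / total_flags if total_flags > 0 else 0.0


# Hypothetical rates: 2% of accounts underage, 90% of them detected,
# and 5% of legitimate accounts wrongly flagged.
precision = flag_precision(prevalence=0.02, sensitivity=0.90, false_positive_rate=0.05)
print(round(precision, 2))  # 0.27
```

Under these assumed rates, roughly three out of four flagged accounts would belong to users who are not underage, which is why the cost of the follow-up verification step matters as much as the detector's accuracy.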

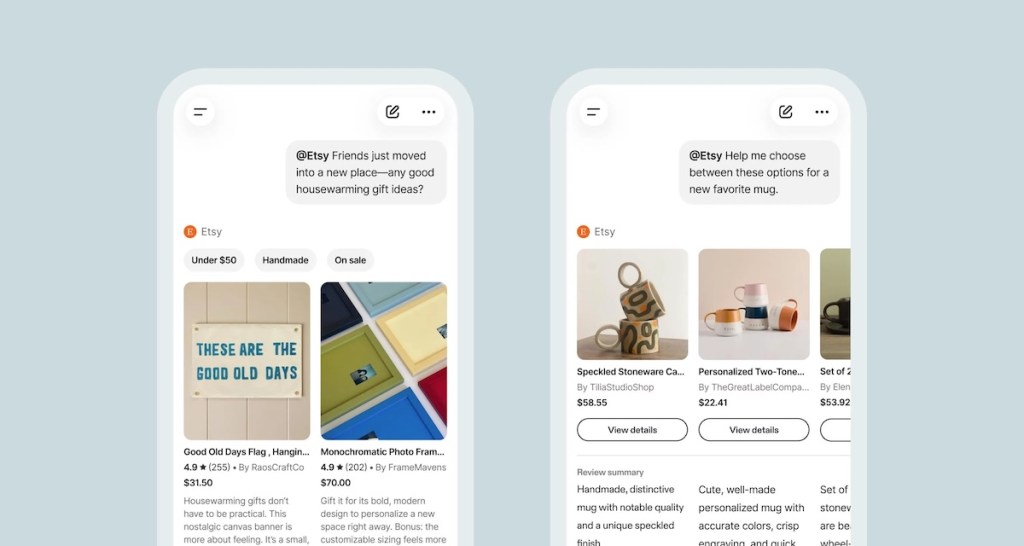

Rolling Out Facebook and Instagram Teen Accounts

The deployment of these detection tools is beginning in specific regions, including Brazil and EU member states, with a U.S. rollout expected for Facebook’s new “Teen Accounts.” These accounts are designed with stricter default settings to protect younger users:

- Private Messaging: Restricted to approved contacts only.

- Comment Suppression: Automated filtering of harmful comments.

- Limited Visibility: Reduced public profile presence.

Users flagged as potentially underage by the AI system will face mandatory age verification. This process mirrors "Know Your Customer" (KYC) standards used in the finance industry, requiring users to provide ID scans or third-party certifications before they can reactivate their accounts.

As Meta pivots toward proactive risk mitigation, the tech industry is watching closely. The success of this initiative depends on whether Meta can balance effective safety enforcement with the need to maintain user trust and avoid the pitfalls of automated, probabilistic analysis.