Much of the die space of modern GPUs is given over to functionality that's not part of the traditional rendering pipeline. Sometimes, that feels like a bit of a waste. Which is why Microsoft's new Shader Model 6.10 preview, announced yesterday, could be mostly good news. It's making matrix math part of the official DirectX API suite. And that means neural rendering in all its various, magical and worrisome forms will be a core part of DirectX.

There are several elements to Microsoft's new Shader Model 6.10 package, including changes to shared shader memory management and raytracing tweaks. But it's arguably the "Matrix" feature that's most interesting.

According to Microsoft, it "unlocks comprehensive hardware acceleration for Matrix-oriented operations across various use cases." The DirectX API did previously have narrow support for matrix math units. But this update promises to make matrix math, and in turn various neural rendering technologies, generic to all GPUs that comply with the new DirectX standard, rather than a proprietary feature that game developers need to support separately for specific graphics card families.

In really simple terms, matrix math is the computational workhorse of modern AI systems, most notably transformer models, the architecture on which most LLMs, or large language models, are built.
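To make that a little more concrete, here's a minimal sketch in plain Python of why matrix math matters so much for AI: a single neural network layer boils down to a matrix multiply plus a little per-element work. The function names and the numbers are purely illustrative assumptions, and this has nothing to do with the actual Shader Model 6.10 API. It's simply the kind of arithmetic that dedicated matrix hardware is built to chew through in bulk.

```python
def matmul(a, b):
    """Multiply an (n x k) matrix by a (k x m) matrix, both given as lists of rows."""
    n, k, m = len(a), len(b), len(b[0])
    assert all(len(row) == k for row in a), "inner dimensions must match"
    return [
        [sum(a[i][p] * b[p][j] for p in range(k)) for j in range(m)]
        for i in range(n)
    ]


def dense_layer(inputs, weights, bias):
    """One neural-network layer: relu(inputs x weights + bias)."""
    product = matmul(inputs, weights)
    # Add the bias per output column, then clamp negatives to zero (ReLU).
    return [[max(0.0, v + bias[j]) for j, v in enumerate(row)] for row in product]


# Hypothetical values, chosen only for illustration.
inputs = [[1.0, 2.0]]              # one sample with two features
weights = [[0.5, -1.0, 0.0],       # two inputs feeding three outputs
           [0.25, 1.0, -2.0]]
bias = [0.0, 0.5, 1.0]

print(dense_layer(inputs, weights, bias))  # prints [[1.0, 1.5, 0.0]]
```

A real model stacks many such layers with far larger matrices, which is why hardware that can do the multiply-and-add step on whole tiles at once makes such a difference.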

Nvidia GPUs have had matrix math hardware, known as Tensor cores, since the RTX 20 Series. AMD was slower to adopt dedicated hardware for matrix math in its GPUs. The RDNA 3 generation had some optimisations in its shader cores for supporting matrix math, before RDNA 4, otherwise known as the Radeon RX 9000 family, more formally added dedicated hardware akin to Nvidia's Tensor cores.

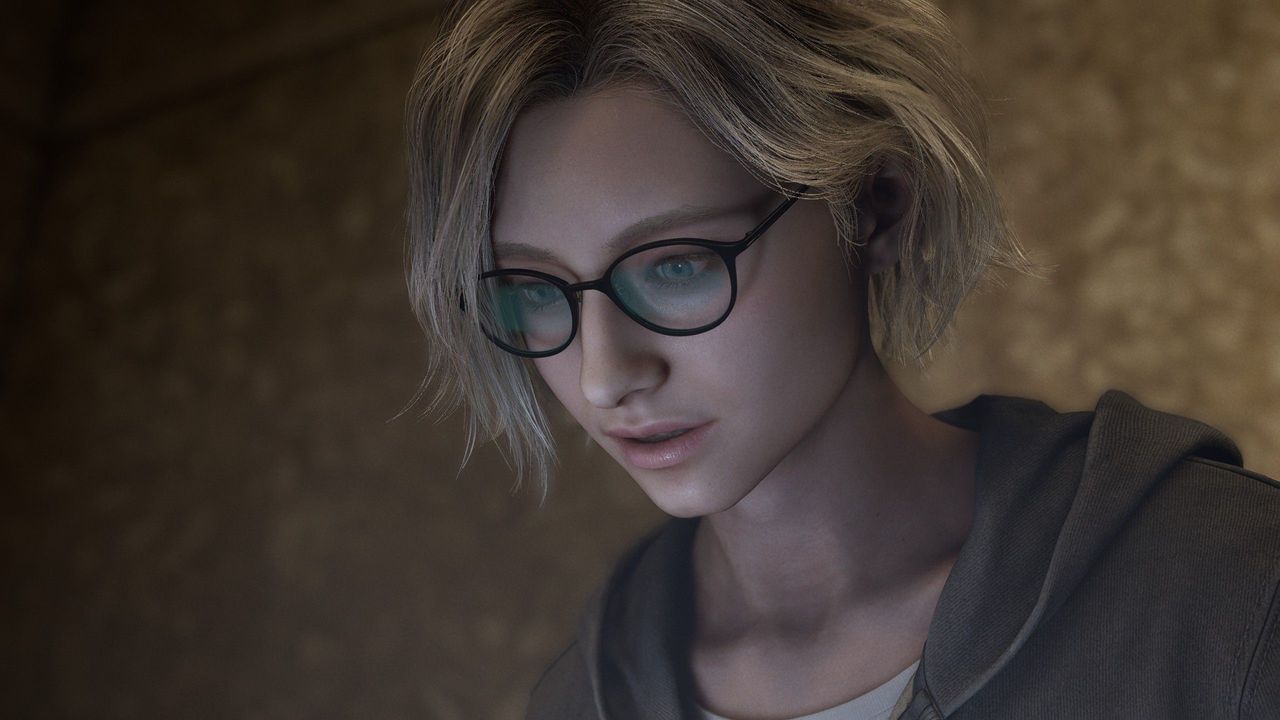

If you're not a fan of DLSS 5, Shader Model 6.10 could actually be a good thing. (Image credit: Nvidia)

The bottom line here is that Shader Model 6.10 promises to make support for hardware matrix math an essential part of DirectX compliance. That means, in theory, various neural rendering game features could be written once for DirectX and work on all compliant hardware, instead of developers having to create code for specific GPUs.

Just note that all this applies only to GPUs that comply with the new Microsoft API. Older AMD GPUs without matrix math hardware almost certainly won't qualify. Still, for compliant hardware, it could mean generic rather than GPU-specific upscaling, for instance. Beyond upscaling, Nvidia has mooted a wide range of so-called "neural rendering" technologies that all depend on matrix math to some degree.

That includes RTX Neural Shaders, which can generate textures, materials, lighting, and volumes; RTX Neural Radiance Cache, a technology for improving lighting using AI; RTX Mega Geometry, for path-tracing massive worlds of dense geometry; and more.

While those are Nvidia-specific examples, they give an idea of the scope of possibilities for so-called neural rendering and a sense of the sorts of rendering features that could be standardised or at least supported generically by future DirectX-compliant GPUs.

That's definitely good news, because it seems likely that future graphics chips will have ever greater proportions of their dies given over to running matrix math. So, generic support via DirectX should mean broader uptake by game devs who no longer have to worry about creating code paths for specific GPUs or GPU families.

And that should mean more advanced features, more extensive and efficient usage of available GPU hardware and, well, better-looking games. And even if you're worried by the likes of DLSS 5 and the insertion of AI slop into games, Shader Model 6.10 should still be a good thing because, in theory at least, it makes neural rendering less of an Nvidia-specific technology.