How Nvidia Leans on AI to Redesign Its Next-Generation GPUs

For more than 16 years, Nvidia's annual GTC event has been packed with talks about using GPUs beyond just rendering 3D graphics. This year's conference maintained that tradition but offered a rare peek behind the curtain at how the company designs its chips. AI is naturally a massive part of this internal evolution, touching everything from process node transitions to the layout of fundamental logic gates. Bill Dally, Nvidia's chief scientist, revealed these insights while discussing "advancing to AI's next frontier" with Google's Jeff Dean. The revelation that an AI program now ports Nvidia's standard cell library to each new semiconductor process node is particularly striking.

Accelerating Process Node Transitions with NVCell

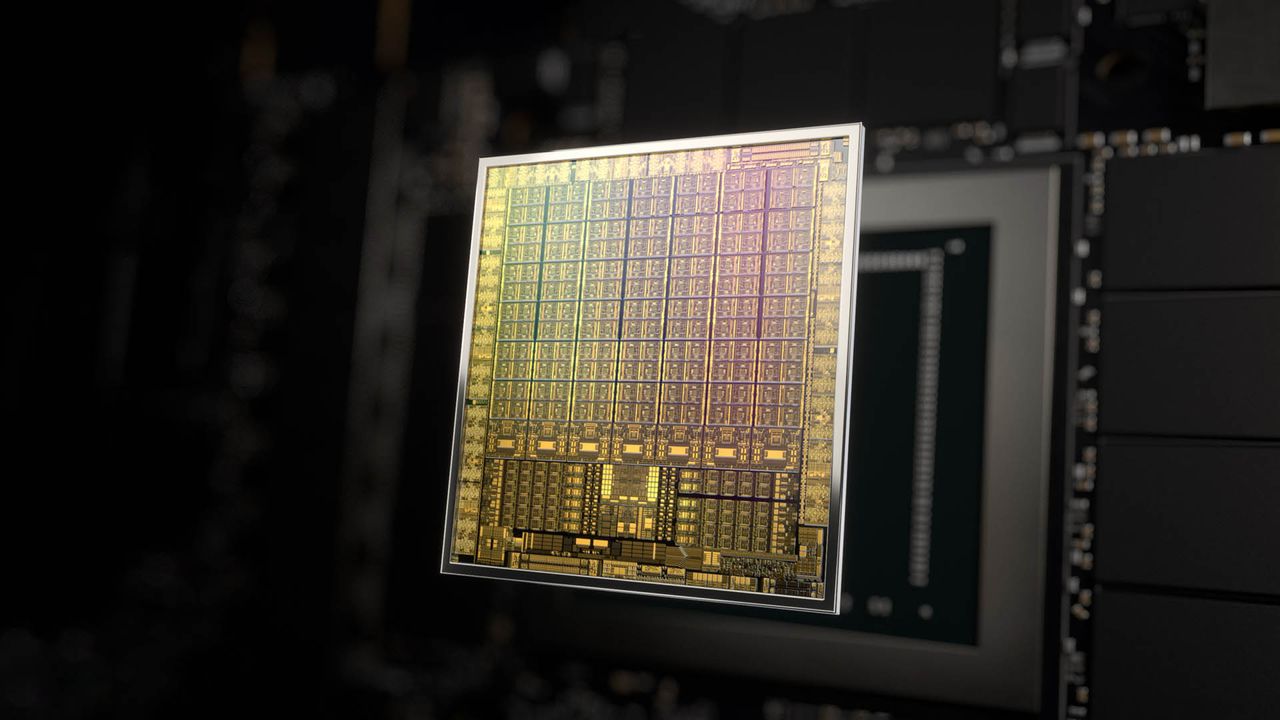

The most immediate impact of this shift appears in how Nvidia handles new manufacturing processes. When a company like TSMC introduces a new node, Nvidia must port its standard cell library to match the physical changes. This library contains roughly 2,500 to 3,000 pre-designed logic gates and interconnections that serve as the building blocks for any new GPU architecture.

Bill Dally explained the old reality: "Every time we have a new semiconductor process, we have to port our standard cell library to it... It used to take a team of eight people about 10 months, so 80 person months." That labor-intensive timeline has been obliterated by NVCell, an AI program based on reinforcement learning. Now running as version 2 or 3, NVCell completes the porting process overnight on a single GPU with results that "match or exceed the human" design in terms of size, power dissipation, and delay.

The efficiency gains are not just about speed; they often yield superior engineering outcomes:

- NVCell produces cell designs that are 20 to 30% better than those created by humans for specific metrics.

- The AI minimizes power consumption while maintaining or improving signal delay times.

- It reduces the reliance on large teams, freeing up human engineers for higher-level architectural challenges.
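To make the "match or exceed" comparison concrete, here is a minimal sketch of how a generated cell might be checked against a human-drawn baseline on the three metrics Dally named (size, power dissipation, delay). The `CellMetrics` type, the function names, the units, and every number are hypothetical illustrations, not NVCell's actual interface or data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CellMetrics:
    """Hypothetical per-cell figures; lower is better for all three."""
    area_um2: float
    power_uw: float
    delay_ps: float


def matches_or_exceeds(candidate: CellMetrics, baseline: CellMetrics) -> bool:
    """True when the candidate is no worse than the baseline on every metric."""
    return (
        candidate.area_um2 <= baseline.area_um2
        and candidate.power_uw <= baseline.power_uw
        and candidate.delay_ps <= baseline.delay_ps
    )


def improvement_pct(candidate_value: float, baseline_value: float) -> float:
    """Percentage reduction relative to the baseline (positive means better)."""
    return 100.0 * (baseline_value - candidate_value) / baseline_value


# Made-up numbers purely for illustration.
human = CellMetrics(area_um2=1.00, power_uw=2.00, delay_ps=40.0)
generated = CellMetrics(area_um2=0.75, power_uw=2.00, delay_ps=40.0)

print(matches_or_exceeds(generated, human))
print(improvement_pct(generated.area_um2, human.area_um2))
```

With these invented figures the generated cell ties on power and delay and is 25% smaller, which is the kind of result the 20 to 30% claim describes.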

Revolutionizing Verification and Chip Architecture

Beyond library porting, Nvidia is deploying AI agents to solve some of the industry's oldest problems in computer design. One specific area where AI is making waves is the "F model," which serves as an executable representation of the GPU right before tape-out. The primary bottleneck in this phase has traditionally been design verification, a process that consumes immense time and resources to prove the chip will work correctly when manufactured.

Separately, Nvidia uses PrefixRL, a reinforcement learning program applied to the age-old problem of placing lookahead stages in carry-lookahead chains, the logic that lets an adder work out its carries in parallel rather than one bit at a time. Dally described this as an approach where the AI tackles the problem "like it was an Atari video game," focusing on creating adders that are just fast enough to meet timing but significantly smaller and more power-efficient.
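The design space the RL program explores can be illustrated with a small simulation. The sketch below builds an adder from generate/propagate signals and compares two textbook prefix networks: a serial chain (few nodes, deep) and a Kogge-Stone network (many nodes, shallow). These are standard structures, not the designs Nvidia's tool produced, and node count and depth stand in only roughly for area and delay.

```python
from typing import Callable, List, Tuple

GP = Tuple[int, int]  # (generate, propagate) for a span of bits


def combine(hi: GP, lo: GP) -> GP:
    """Prefix operator: merge a higher span with the adjacent lower span."""
    g_hi, p_hi = hi
    g_lo, p_lo = lo
    return (g_hi | (p_hi & g_lo), p_hi & p_lo)


def serial_prefix(gp: List[GP]) -> Tuple[List[GP], int, int]:
    """Ripple-style chain: fewest combine nodes, but depth grows with width."""
    out = list(gp)
    for i in range(1, len(out)):
        out[i] = combine(out[i], out[i - 1])
    n = len(gp)
    return out, n - 1, n - 1  # (prefixes, node count, logic depth)


def kogge_stone_prefix(gp: List[GP]) -> Tuple[List[GP], int, int]:
    """Kogge-Stone network: logarithmic depth at the cost of many more nodes."""
    out = list(gp)
    nodes = depth = 0
    dist = 1
    while dist < len(out):
        prev = list(out)  # every node in a stage reads the previous stage
        for i in range(dist, len(out)):
            out[i] = combine(prev[i], prev[i - dist])
            nodes += 1
        depth += 1
        dist *= 2
    return out, nodes, depth


def add(a: int, b: int, width: int,
        prefix_fn: Callable[[List[GP]], Tuple[List[GP], int, int]]) -> Tuple[int, int, int]:
    """Add two unsigned integers; returns (sum, node count, depth)."""
    gp = [(((a >> i) & 1) & ((b >> i) & 1), ((a >> i) & 1) ^ ((b >> i) & 1))
          for i in range(width)]
    prefixes, nodes, depth = prefix_fn(gp)
    carries = [0] + [g for g, _ in prefixes]  # carries[i] is the carry into bit i
    total = 0
    for i in range(width):
        total |= (gp[i][1] ^ carries[i]) << i
    total |= carries[width] << width  # carry-out becomes the top bit
    return total, nodes, depth


print(add(200, 100, 8, serial_prefix))       # (300, 7, 7)
print(add(200, 100, 8, kogge_stone_prefix))  # (300, 17, 3)
```

Both networks compute the same sum, but the 8-bit serial chain uses 7 combine nodes at depth 7 while Kogge-Stone uses 17 nodes at depth 3. Many other valid networks sit between these two extremes, and searching that space for one that just meets timing with the least hardware is the kind of problem the article describes.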

The results have been surprising, leading to design patterns that humans would never conceive:

- The RL program generates "totally bizarre designs" that defy traditional engineering intuition.

- These unconventional layouts outperform human-optimized versions by a significant margin in timing and area efficiency.

- The AI prioritizes meeting specific timing constraints rather than simply chasing maximum speed, resulting in more balanced chip performance.
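The "fast enough, then as small as possible" objective can be sketched as a scoring function. This is an assumed, simplified reward for illustration only; the `AdderCandidate` type, the penalty weight, and the candidate numbers are all hypothetical and do not come from Nvidia.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class AdderCandidate:
    name: str
    delay_ns: float
    area_um2: float


def reward(c: AdderCandidate, target_delay_ns: float,
           penalty_per_ns: float = 1000.0) -> float:
    """Smaller area scores higher; missing the timing target is penalized heavily.

    Being faster than the target earns nothing extra, so the search is
    nudged toward designs that only just meet timing.
    """
    violation = max(0.0, c.delay_ns - target_delay_ns)
    return -c.area_um2 - penalty_per_ns * violation


# Made-up candidates purely for illustration.
candidates: List[AdderCandidate] = [
    AdderCandidate("fast_but_large", delay_ns=0.30, area_um2=120.0),
    AdderCandidate("balanced", delay_ns=0.48, area_um2=70.0),
    AdderCandidate("small_but_slow", delay_ns=0.60, area_um2=50.0),
]

best = max(candidates, key=lambda c: reward(c, target_delay_ns=0.50))
print(best.name)  # balanced
```

The fastest candidate loses because its extra speed buys no reward, and the smallest loses because it misses the 0.50 ns target; the winner is the one that barely meets timing with the least area.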

Internal Knowledge Bases: ChipNeMo and BugNeMo

Not all of Nvidia's AI integration is about raw speed or exploration; some tools are designed to democratize knowledge within the company itself. Dally detailed how Nvidia took generic large language models (LLMs) and fine-tuned them on proprietary design documents, the RTL hardware code for every GPU the company has made, and internal architecture specifications, producing ChipNeMo and BugNeMo. The result is a highly specialized model that understands the nuances of Nvidia's own ecosystem better than any external tool could.

This "GPUgpt" serves as a powerful mentorship tool, particularly for junior designers who might otherwise spend hours waiting for senior engineers to explain basic concepts such as how a texture unit works. By asking ChipNeMo detailed questions about hardware design, new employees can get instant, comprehensive answers without bottlenecking senior staff. Relying on such a model does carry risk, however: if it hallucinates a plausible but wrong explanation of what a texture unit does, a designer who trusts the answer could carry that error into real design work.

Ultimately, Nvidia's approach demonstrates that AI is no longer just a feature of its products but the very engine driving its design philosophy. By leveraging reinforcement learning for physical layout and specialized LLMs for knowledge transfer, the company continues to push the boundaries of what is possible in semiconductor engineering.