Google’s Android Show: The Roadmap to AI-First Computing

Google’s recent Android Show I/O Edition delivered a sweeping roadmap that fundamentally redefines how users interact with their devices and the surrounding ecosystem. Spanning more than 20 major announcements, the event highlighted Google’s aggressive intent to weave Gemini intelligence into every layer of the Android experience, signaling a shift from reactive interfaces to proactive, context-aware computing.

This isn’t just about incremental updates; it’s about a complete architectural shift in how the platform operates, from hardware partnerships to vibe-coded widgets and agentic AI capabilities.

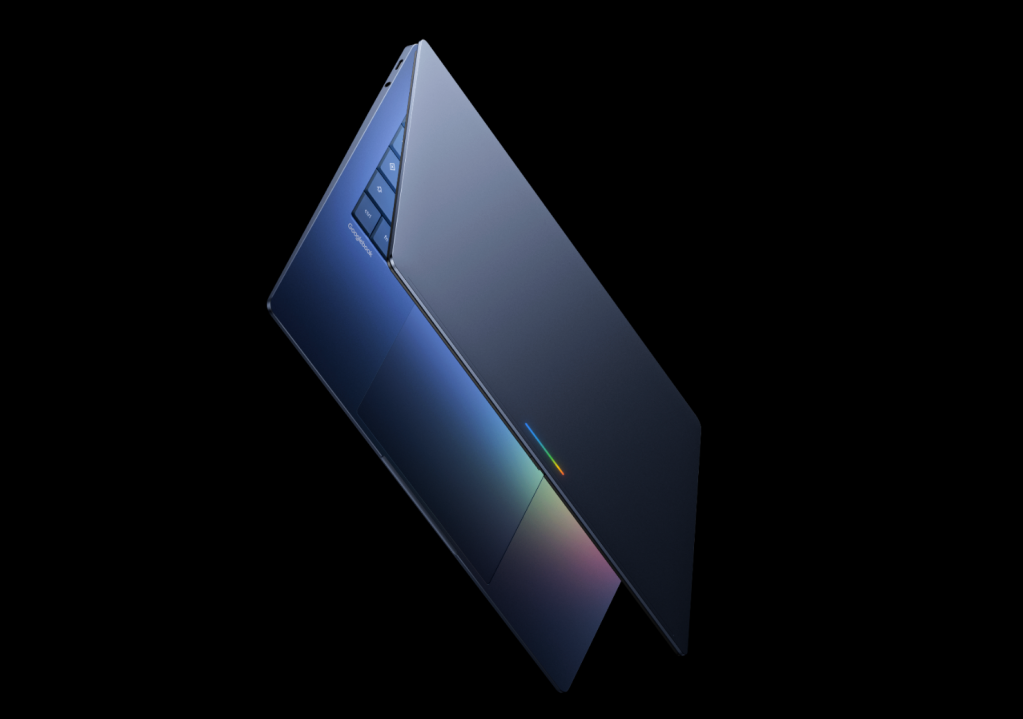

The Rise of Googlebooks and AI-Native Hardware

A cornerstone of this new era is Googlebooks, a new category of laptops launching this fall in partnership with Acer, Asus, Dell, HP, and Lenovo. Google describes them as the first laptop lines built from the ground up around Gemini, moving beyond traditional PC paradigms.

The core differentiator is Magic Pointer, a next-generation cursor that integrates Gemini for predictive input. Together with the rest of the Googlebook platform, it enables:

- Cross-device continuity, allowing seamless handoff between the laptop and Android phones.

- Custom widget creation directly from natural language prompts.

- Automated note-taking and smart scheduling during multitasking scenarios.

Google says these devices will offer context-aware assistance that anticipates user needs before they are stated explicitly, a significant departure from standard Windows or macOS workflows.

Vibe-Coded Widgets and Natural Language UI

Google is pushing toward frictionless personalization with Create My Widget, a feature that enables "vibe-coding" via natural language. Users can simply describe a desired dashboard, and the system will generate layout options ready for customization.

This functionality will debut on Samsung Galaxy and Pixel devices this summer, underscoring Google’s strategy to make interface design accessible to non-developers. By lowering the barrier to entry for custom UI elements, Google aims to make every Android device feel uniquely tailored to the user’s specific habits and preferences.

Android Auto: Edge-to-Edge Intelligence

The Android Auto interface is being redesigned to run edge to edge on in-car displays. The new UI accommodates ultrawide, circular, and other custom display shapes, placing widgets so that critical information stays visible without obscuring navigation.

Key updates include:

- Redesigned interfaces for media apps such as YouTube Music and Spotify, optimized specifically for in-car use.

- Standardized 60fps playback on supported vehicle systems from manufacturers such as BMW, Ford, and Hyundai.

Separately from Android Auto, Gemini is coming to Chrome on Android, extending conversational capabilities to web pages so users can summarize them and ask contextual questions directly within the mobile browser.

Creator Tools and 3D Emoji Evolution

For content creators, Android is introducing Screen Reactions, a feature that records users alongside their on-screen activity. This lets people make reaction-style short-form videos, similar to those on TikTok or Reels, natively on Android, streamlining the creation process.

Additionally, a partnership with Meta brings Ultra HDR, native stabilization, and optimized capture pipelines to Instagram, exclusively on Android. Editing tools in the Edit app now include "smart enhance" and "sound separation," boosting quality without the need for third-party plugins.

On the aesthetic front, all 4,000 Android emojis are being refreshed. The redesign moves away from flat icons toward richer, more realistic visuals, aiming to capture nuance in tone and emotion that aligns with modern communication habits.

Gemini’s Agentic Capabilities and Smart Input

Perhaps the most profound shift is the introduction of agentic capabilities for Gemini. Under this framework, apps exchange contextual data to automate complex tasks. For example, a photo of an event flyer can automatically trigger travel searches, while grocery lists can auto-populate carts in preferred shopping apps.

Google says this context-aware automation runs across the OS under strict, privacy-protective default settings.

Complementing this is Rambler on Gboard, which refines speech-to-text by removing filler words ("ums," "ahs") and resolving mid-sentence self-corrections, turning "Let’s meet at 3 PM... um, 2 PM" into "Let’s meet at 2 PM."
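To make the Rambler cleanup concrete, here is a deliberately naive, rule-based sketch of the two behaviors described above: dropping filler words and keeping only the corrected time in a phrase like "Let's meet at 3 PM... um, 2 PM". Google has not said how Rambler works, and this is not its implementation; the function name `tidy_dictation`, the filler list, and the time pattern are all hypothetical choices made for illustration.

```python
import re

# Hypothetical filler vocabulary, for illustration only (not Gboard's).
FILLERS = r"\b(?:um+|uh+|ah+|er+)\b"

# A spoken clock time such as "3 PM" or "10:30 am".
TIME = r"\d{1,2}(?::\d{2})?\s?[ap]m"

# Naive self-correction pattern: "<time>[...|,] [filler][,] <time>".
CORRECTION = re.compile(
    rf"({TIME})\s*(?:\.\.\.|,)?\s*(?:{FILLERS})?\s*,?\s*({TIME})",
    re.IGNORECASE,
)


def tidy_dictation(text: str) -> str:
    """Toy transcript cleanup: resolve a time correction, then drop fillers."""
    # Keep only the second (corrected) time when two times appear back to back.
    text = CORRECTION.sub(lambda m: m.group(2), text)
    # Remove remaining filler words, plus a comma that directly follows them.
    text = re.sub(rf"\s*{FILLERS},?", "", text, flags=re.IGNORECASE)
    # Collapse any doubled whitespace left behind.
    return re.sub(r"\s{2,}", " ", text).strip()


if __name__ == "__main__":
    print(tidy_dictation("Let's meet at 3 PM... um, 2 PM"))  # Let's meet at 2 PM
    print(tidy_dictation("So, um, I think uh we should go"))  # So, I think we should go
```

Hand-written rules like these break down quickly on real speech. For example, the correction pattern cannot tell "3 PM, 5 PM" (two separate times) from a genuine self-correction, which is one reason a context-aware language model is a more plausible basis for a feature like Rambler.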

Expanded Ecosystem and Security Enhancements

Google is also breaking down silos with Quick Share. The service is expanding beyond Pixel devices to include Samsung, Oppo, OnePlus, Vivo, Xiaomi, and Honor, with QR-code-based sharing as a fallback for devices that don’t support it natively. Google also plans to build Quick Share support directly into apps such as WhatsApp.

For people switching phones, a streamlined iOS-to-Android transfer tool will move passwords, photos, messages, favorite apps, contacts, eSIM profiles, and home screen layouts to Samsung and Pixel devices this year.

Security remains a priority with Enhanced Default Theft Protection rolling out globally on Android 17 devices. This includes Remote Lock, Theft Detection Lock, extended PIN attempts, and law enforcement access to IMEI for rapid recovery. Additionally, Intrusion Logging supports advanced investigation on Pixel devices with Advanced Protection Mode enabled.

What This Means for Android Users

Google’s Android Show reimagines the platform as an intelligent, adaptive environment where AI handles routine decisions while giving users precise control over outputs. The convergence of hardware innovation, such as Googlebooks, with conversational UIs and agentic AI suggests that Android is evolving into the central nervous system for daily digital life.

As Gemini intelligence permeates apps, widgets, and automation, developers gain powerful tools to build richer experiences, while users enjoy greater personalization without sacrificing agency. This comprehensive overhaul positions Android not just as a mobile operating system, but as the foundational layer for the next generation of personal computing.