Adobe is repositioning Creative Cloud from a collection of separate software tools into an orchestrator of creative intent. The new Adobe Firefly AI assistant narrows the gap between what a creator envisions and how it gets executed: the agent works across Photoshop, Premiere Pro, Illustrator, and Acrobat, and lets users run complex workflows with simple natural-language commands.

This evolution marks a significant shift in the industry, moving away from "click-heavy" interfaces. Instead of focusing on the manual "how," creators can now define the "what," letting the Adobe Firefly AI assistant handle the technical execution.

How Adobe’s New Firefly AI Assistant Orchestrates Workflows

The defining feature of this new system is its level of agency. Unlike previous integrations, which generated single assets within a single tool, this assistant (formerly known as "Project Moonlight") operates across the entire Creative Cloud ecosystem.

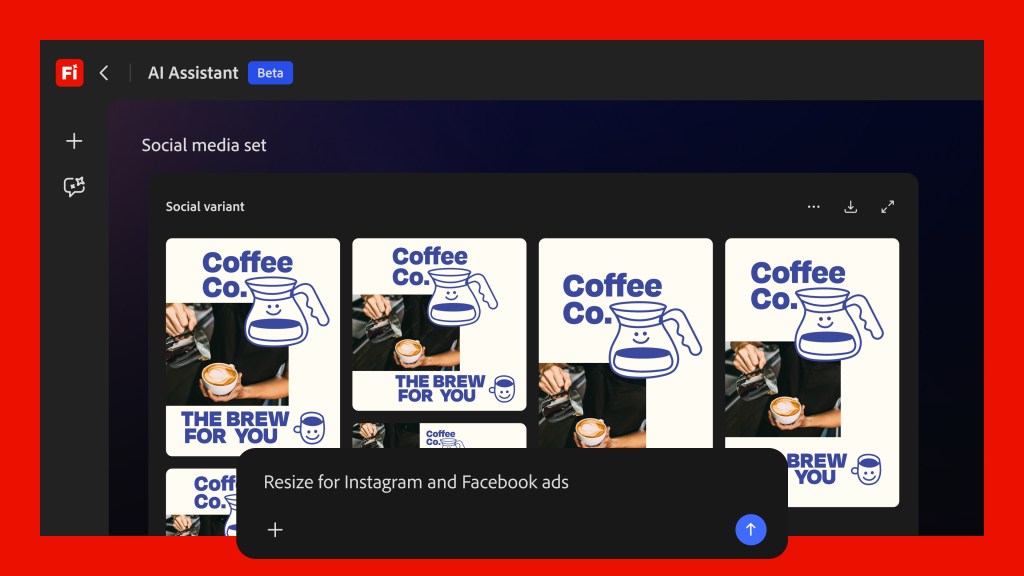

When a user gives a command such as "adapt these product photos for Instagram Stories and LinkedIn," the agent manages the entire chain of events, coordinating actions across multiple applications to complete the task:

- Photoshop: Automatically crops or expands assets to fit different aspect ratios.

- Adobe Express: Optimizes file sizes for web and social use.

- Lightroom: Adjusts color profiles to ensure brand consistency.

- File Management: Saves final outputs directly to designated folders.

By unifying these separate applications under one layer, Adobe aims to reduce the technical friction and constant context switching that multi-app workflows usually involve.
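To make the cropping step in the workflow above concrete, here is a minimal sketch of the aspect-ratio arithmetic involved. It is not Adobe's implementation and calls no Adobe API. The function name `center_crop_box` and the `TARGETS` table are hypothetical, and the pixel sizes are commonly cited platform dimensions, assumed here for illustration only.

```python
from fractions import Fraction

# Hypothetical target sizes (assumptions for illustration, not values from Adobe).
TARGETS = {
    "instagram_story": (1080, 1920),  # 9:16 portrait
    "linkedin_post": (1200, 627),     # roughly 1.91:1 landscape
}


def center_crop_box(src_w, src_h, target_w, target_h):
    """Return (left, top, right, bottom) of the largest centered box
    inside the source image that matches the target aspect ratio."""
    target_ratio = Fraction(target_w, target_h)
    src_ratio = Fraction(src_w, src_h)
    if src_ratio > target_ratio:
        # Source is wider than the target: keep full height, trim the sides.
        crop_h = src_h
        crop_w = int(src_h * target_ratio)
    else:
        # Source is taller (or equal): keep full width, trim top and bottom.
        crop_w = src_w
        crop_h = int(src_w / target_ratio)
    left = (src_w - crop_w) // 2
    top = (src_h - crop_h) // 2
    return (left, top, left + crop_w, top + crop_h)


if __name__ == "__main__":
    # A 4000x3000 product photo adapted to each hypothetical target.
    for name, (w, h) in TARGETS.items():
        print(name, center_crop_box(4000, 3000, w, h))
```

For a 4000x3000 source, this yields the box `(1156, 0, 2843, 3000)` for the story format and `(0, 455, 4000, 2545)` for the LinkedIn format. A real pipeline would then resize the cropped region to the target dimensions, or expand the canvas generatively when cropping would cut off the subject.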

Intelligent Skills and Contextual Control

Adobe’s strategy relies heavily on "skills": pre-defined, multi-step workflows designed to reduce cognitive load. These templates automate complex projects, such as splitting a single piece of content into versions for various digital platforms from one prompt. To improve precision, the Adobe Firefly AI assistant uses a feedback loop in which it learns from user corrections, gradually adapting to specific aesthetic preferences and individual workflow habits.

The interface also introduces dynamic, context-sensitive controls. For example, if you are editing a photo of a forest, the assistant might present a specialized slider to adjust foliage density, eliminating the need for manual masking or tedious layer adjustments.

Key Capabilities of the New System

- Cross-application orchestration: Assets move between Photoshop, Illustrator, Premiere Pro, Lightroom, and Acrobat without manual export/import steps.

- Dynamic UI controls: Context-sensitive buttons and sliders that appear based on project content for granular control.

- Skill-based automation: Pre-built workflows for tasks like social media adaptation or document summarization in Acrobat.

- Human-in-the-loop flexibility: Users can interject, modify parameters, or override AI decisions at any stage.

Looking ahead, Adobe is exploring integrations with third-party large language models (LLMs) to further enhance the agent's reasoning capabilities. Significant updates are also on the horizon for the Firefly video editor, including advanced speech noise reduction, audio adjustment tools, and deeper integration with Adobe Stock.