Google Targets Bad Ads Over Bad Actors with AI Precision

Before a user ever sees an ad promising an impossible crypto windfall, Google’s Gemini models have already scanned the ad creative, cross-referenced it against millions of known scam patterns, and blocked it before a single impression is served. This invisible shield represents a fundamental shift in how the search giant polices its ad marketplace: rather than relying on blunt bans after the damage is done, it deploys artificial intelligence to intercept bad ads at their source. Google’s 2025 Ads Safety Report describes this shift, in which the focus has moved from punishing bad actors to neutralizing the malicious content they deploy.

Granular Precision: Shifting Focus from Punishment to Prevention

The traditional model of ad safety relied heavily on reactive measures: waiting for user reports or post-hoc audits to identify bad actors, then suspending their accounts en masse. This approach often resulted in collateral damage, where legitimate businesses were caught up in sweeping bans due to algorithmic overreach or mistaken identity. Google’s new strategy, championed by Keerat Sharma, VP and general manager of ads privacy and safety, moves enforcement down to the level of the individual creative rather than targeting advertiser accounts broadly.

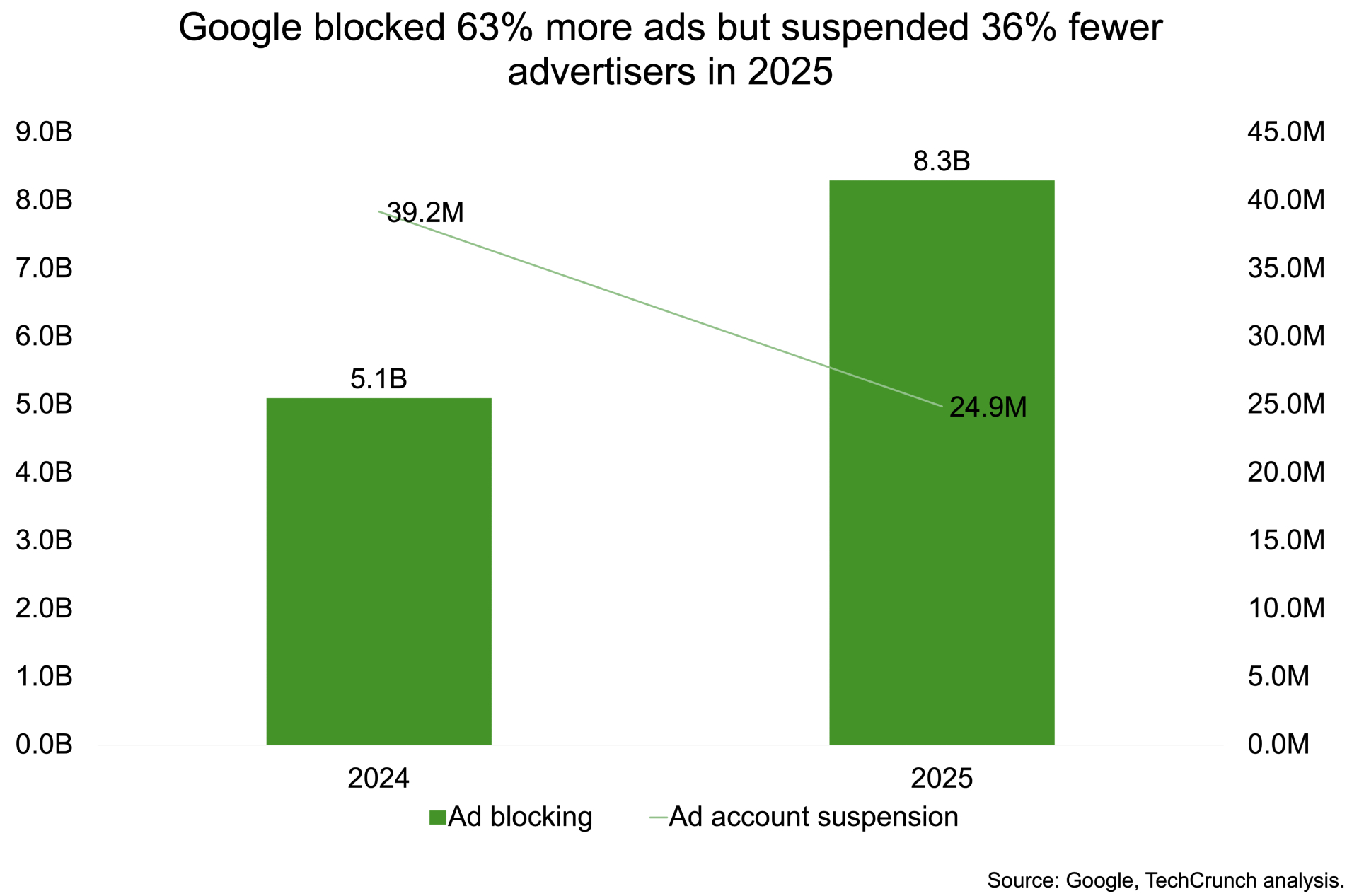

By analyzing individual ad elements (images, text, landing page behavior) rather than just advertiser profiles, Google can isolate malicious campaigns without suspending an entire account. This shift has yielded significant results:

- Incorrect suspensions have dropped by 80% year over year, preserving revenue for legitimate businesses caught in the crossfire.

- The system now operates on a "layered defense" model, combining advertiser verification with real-time AI scanning to stop bad actors from creating accounts in the first place.

- The AI detects patterns across large campaigns, identifying subtle variations used by scammers to evade static detection rules.
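
The difference between account-level and creative-level enforcement can be illustrated with a minimal sketch. Everything in it is hypothetical: the `Creative` and `Account` types, the `looks_malicious` check, and the phrase list are stand-ins invented for illustration, not Google's system or API. It assumes a two-layer flow in which an unverified advertiser serves nothing, and a verified advertiser has each creative judged on its own.

```python
from dataclasses import dataclass, field


@dataclass
class Creative:
    creative_id: str
    text: str


@dataclass
class Account:
    account_id: str
    verified: bool
    creatives: list[Creative] = field(default_factory=list)


# Hypothetical stand-in for a model score; a production system would use a
# trained classifier, not a phrase list.
SCAM_PHRASES = ("guaranteed returns", "double your crypto")


def looks_malicious(creative: Creative) -> bool:
    lowered = creative.text.lower()
    return any(phrase in lowered for phrase in SCAM_PHRASES)


def enforce(account: Account) -> dict[str, list[str]]:
    """Return which creative IDs are served and which are blocked."""
    # Layer 1 (assumed): an unverified advertiser serves nothing.
    if not account.verified:
        return {"served": [], "blocked": [c.creative_id for c in account.creatives]}

    # Layer 2 (assumed): scan each creative on its own, so one bad ad
    # does not take down the whole account.
    result: dict[str, list[str]] = {"served": [], "blocked": []}
    for creative in account.creatives:
        key = "blocked" if looks_malicious(creative) else "served"
        result[key].append(creative.creative_id)
    return result


shop = Account(
    account_id="acct-1",
    verified=True,
    creatives=[
        Creative("c1", "Spring sale on running shoes"),
        Creative("c2", "Double your crypto overnight, guaranteed returns"),
        Creative("c3", "Free shipping on orders over $50"),
    ],
)
decision = enforce(shop)
print(decision)
```

In this sketch only `c2` is blocked while `c1` and `c3` keep serving, which is the behavior an account-wide suspension would not allow.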

The volume of blocked ads also reflects the adaptive nature of modern fraudsters. As generative AI becomes a tool for scammers to produce deceptive content at scale, Google has had to counter with equally sophisticated technology. Gemini models are now trained to spot these synthetic patterns, allowing the company to block campaigns earlier in the pipeline than ever before.

Global Hotspots and Emerging Threats on the Horizon

The enforcement landscape varies significantly by region, reflecting local regulatory pressures and the specific tactics favored by fraudsters in those markets. The United States saw over 1.7 billion ads removed and 3.3 million advertiser accounts suspended, with ad network abuse, misrepresentation, and sexual content leading the list of violations. In a mature market like this, enforcement remains aggressive as scammers continue to exploit the sheer volume of traffic.

However, the most dramatic shifts are occurring in emerging markets where digital adoption is accelerating faster than regulatory frameworks can adapt. India, Google’s largest market by user count, blocked 483.7 million ads—nearly double the previous year's figure. Despite this massive increase in takedowns, account suspensions fell from 2.9 million to 1.7 million, highlighting the success of the granular approach even at scale. Top violations in India included trademark infringement, financial services fraud, and copyright issues, pointing to a specific wave of scams targeting local economic anxieties.
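
The size of the drop in India's account suspensions is easier to judge as a percentage. The short calculation below uses only the two suspension figures quoted above; the `percent_change` helper is a name introduced here for illustration.

```python
def percent_change(previous: float, current: float) -> float:
    """Relative change from previous to current, in percent."""
    return (current - previous) / previous * 100


# India account suspensions: 2.9 million the previous year, 1.7 million now.
india_suspensions_change = percent_change(2.9e6, 1.7e6)
print(f"{india_suspensions_change:.1f}%")  # -41.4%
```

So suspensions fell by roughly 41% in a year when blocked ads nearly doubled.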

The data suggests that while bad actors are becoming more sophisticated, Google’s AI is keeping pace:

- Scam-related content accounts for 602 million of the blocked ads globally.

- Advertiser accounts linked to scams total 4 million, a small number next to the billions of individual ads intercepted.

- Real-time response capabilities allow Google to adapt to emerging threats without waiting for quarterly reviews or manual audits.
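
To put the first two figures in proportion, the rough calculation below divides the scam-related ads by the scam-linked accounts. It assumes the two numbers describe the same population of advertisers, which the figures above do not confirm, so the result is an order-of-magnitude illustration only.

```python
scam_ads_blocked = 602e6      # scam-related ads blocked globally
scam_linked_accounts = 4e6    # advertiser accounts linked to scams

# Assumption: every blocked scam ad belongs to one of these accounts.
ads_per_account = scam_ads_blocked / scam_linked_accounts
print(f"about {ads_per_account:.1f} blocked scam ads per scam-linked account")
```

Under that assumption, each scam-linked account corresponds to about 150 blocked ads, which helps explain why acting on individual ads covers far more ground than counting suspensions alone.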

A Future Built on Prevention Over Punishment

The trajectory is clear: Google no longer treats ad safety as a policing exercise but as an architectural feature of its platform. The goal is not merely to remove bad content, but to prevent it from ever becoming visible. This proactive stance relies heavily on the continuous training of Gemini models against an evolving threat landscape where scammers use AI to mimic legitimate advertising at a fraction of the cost.

While the numbers indicate success, the arms race is far from over. As Google rolls out new defenses, bad actors will inevitably adapt their tactics, leading to fluctuations in both blocked ads and suspensions. However, the structural shift toward granular enforcement suggests that future updates will focus less on account-wide bans and more on isolating specific malicious elements within a campaign. For advertisers, this means a safer ecosystem with fewer false positives; for users, it promises a browsing experience increasingly free from the deceptive content that has long plagued digital advertising. The era of reactive banning is ending, replaced by an automated, AI-driven firewall designed to stop harm before it happens.