Does the boundary between parental guidance and digital surveillance vanish when an artificial intelligence becomes a reporting tool for the household? Meta's latest expansion of its supervision suite suggests that, for many families, that line is being intentionally blurred. The social media giant has announced an update that lets parents see the topics their teen has discussed with Meta AI across Facebook, Messenger, and Instagram.

New Features for Meta AI Parental Oversight

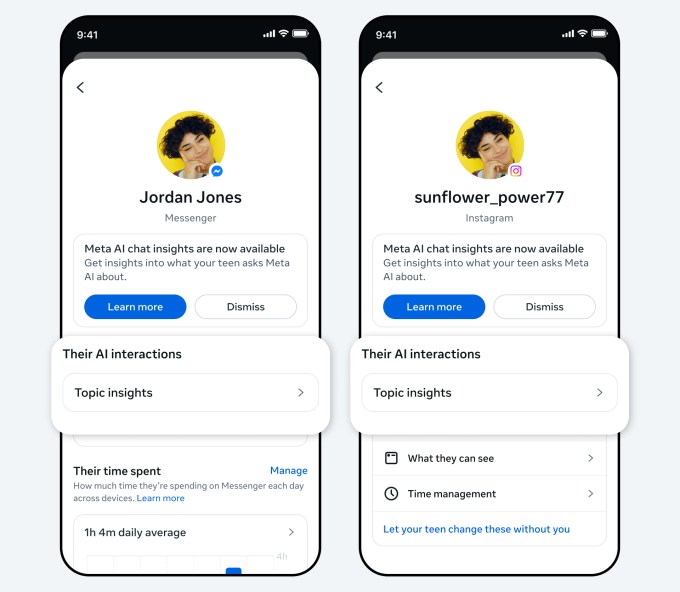

The core of this update is a newly introduced "Insights" tab within Meta's existing supervision hub. Rather than providing a verbatim transcript of every interaction, a move that would likely provoke a significant privacy backlash, Meta is opting for a high-level summary of engagement. The tab lets parents review the themes their teen has explored with the Meta AI chatbot over the past week.

The granularity of this data is designed to offer context without total exposure. For example, a parent might see that their teen has been asking about "Lifestyle," a category that can then be expanded into subcategories such as fashion, food, or holidays. This tiered approach adds a layer of abstraction: it surfaces enough information to flag potential concerns while attempting to preserve a semblance of the teen's digital autonomy.

The rollout is currently limited to five countries:

- United States

- United Kingdom

- Australia

- Canada

- Brazil

Meta has indicated, however, that it expects to deploy the feature globally in the coming weeks, signaling a standardized approach to Meta AI parental controls across its entire ecosystem.

A Strategic Pivot Toward Safety and Liability

This move does not exist in a vacuum; it is a calculated response to an increasingly hostile legal landscape for Big Tech. The new features follow sustained pressure over child safety on Meta's platforms. Most notably, the company recently faced a landmark case in New Mexico, where it was held legally liable for failing to protect minors, a verdict that sets a troubling precedent for the industry.

In the wake of such legal scrutiny, Meta has taken several preemptive steps to modify its AI ecosystem:

- Suspension of AI Characters: In January, Meta globally disabled access to its interactive AI personas, such as those modeled after Snoop Dogg and Paris Hilton, for teenage users.

- Topic-Based Monitoring: Shifting the focus from personified interaction to subject-based observation via the new "Insights" tab.

- Guided Dialogue: The introduction of suggested "conversation starters" designed to help parents initiate non-judgmental discussions about AI usage.

- Expert Oversight: The establishment of an AI Wellbeing Expert Council to guide the development of age-appropriate generative tools.

By pivoting from interactive, character-driven engagement to a more structured, topic-based model, Meta is attempting to mitigate the risks of unpredictable AI behavior while simultaneously offering parents the "proof" of safety they demand.

The Future of Algorithmic Transparency

The implementation of these tools highlights the growing tension between user privacy and safety compliance. While Meta frames this as a way to empower parents, critics often point out that such features can erode the trust necessary for healthy adolescent development. If a guardian can see every time a teen raises topics such as "Health and Wellbeing" or "Mental Health," the AI ceases to be a private sounding board and instead becomes an extension of the surveillance state within the home.

As generative AI becomes more deeply integrated into the social fabric, the industry must decide whether the goal is to create a transparent window for authority or a safe space for exploration. Meta's current trajectory favors the former. The success of this rollout will depend on whether parents find these Meta AI insights genuinely useful or merely an intrusive layer of metadata that complicates the very relationship they are meant to protect.