Why OpenAI Backs Bill That Would Limit Liability for AI-Enabled Mass Deaths or Financial Disasters

OpenAI is backing a bill that would limit liability for AI-enabled mass deaths or financial disasters, a striking strategic pivot for the creator of ChatGPT. The Illinois bill, known as SB 3444 and currently moving through the state legislature, would explicitly shield developers of frontier AI from lawsuits arising from incidents involving 100 or more deaths or damages exceeding $1 billion. The protection applies provided the developer did not intentionally or recklessly cause the incident and has published safety reports. Having previously played defense against liability frameworks that might hold tech giants accountable for their creations' autonomous actions, OpenAI has now formally endorsed this legislation.

This move suggests a calculated effort to secure a unified federal standard rather than risking a fragmented landscape of state-level regulations. For major players like OpenAI, Google, and Anthropic, such a shift could prevent innovation from being stifled by a complex web of conflicting compliance rules. The company argues that consistency is key to maintaining US leadership in the global AI race while avoiding the pitfalls of regulatory arbitrage.

Defining Critical Harm and Frontier Models

The core of the proposed legislation rests on a specific legal threshold it calls critical harm: an incident involving 100 or more deaths or more than $1 billion in damages. Under this framework, AI labs would be immune from civil liability for such catastrophic outcomes if they can demonstrate that the incident did not result from intentional misconduct or reckless behavior. The bill defines a frontier model as any system trained using more than $100 million in computational costs. This definition effectively targets the largest and most capable systems currently in development by dominant US technology firms, while excluding smaller models.

The scope of protection covers scenarios where an AI system acts autonomously to cause harm that would constitute a criminal offense if committed by a human being. Potential high-stakes scenarios include:

- The autonomous generation or deployment of chemical, biological, radiological, or nuclear weapons.

- System malfunctions leading to mass casualty events in critical infrastructure.

- Algorithmic manipulation causing financial market collapses resulting in billion-dollar losses.

While the bill requires labs to publish safety, security, and transparency reports on their websites as a condition for immunity, critics argue this creates a "voluntary compliance" loophole. Opponents suggest that bad actors could claim adherence on paper while failing to prevent actual disasters. The legislation essentially posits that unless an AI developer intended the disaster or acted recklessly, the technology's inherent unpredictability absolves them of legal responsibility. This marks a significant departure from traditional product liability law, which often holds manufacturers responsible for foreseeable risks associated with their products.

Strategic Shifts and Federal Harmonization Efforts

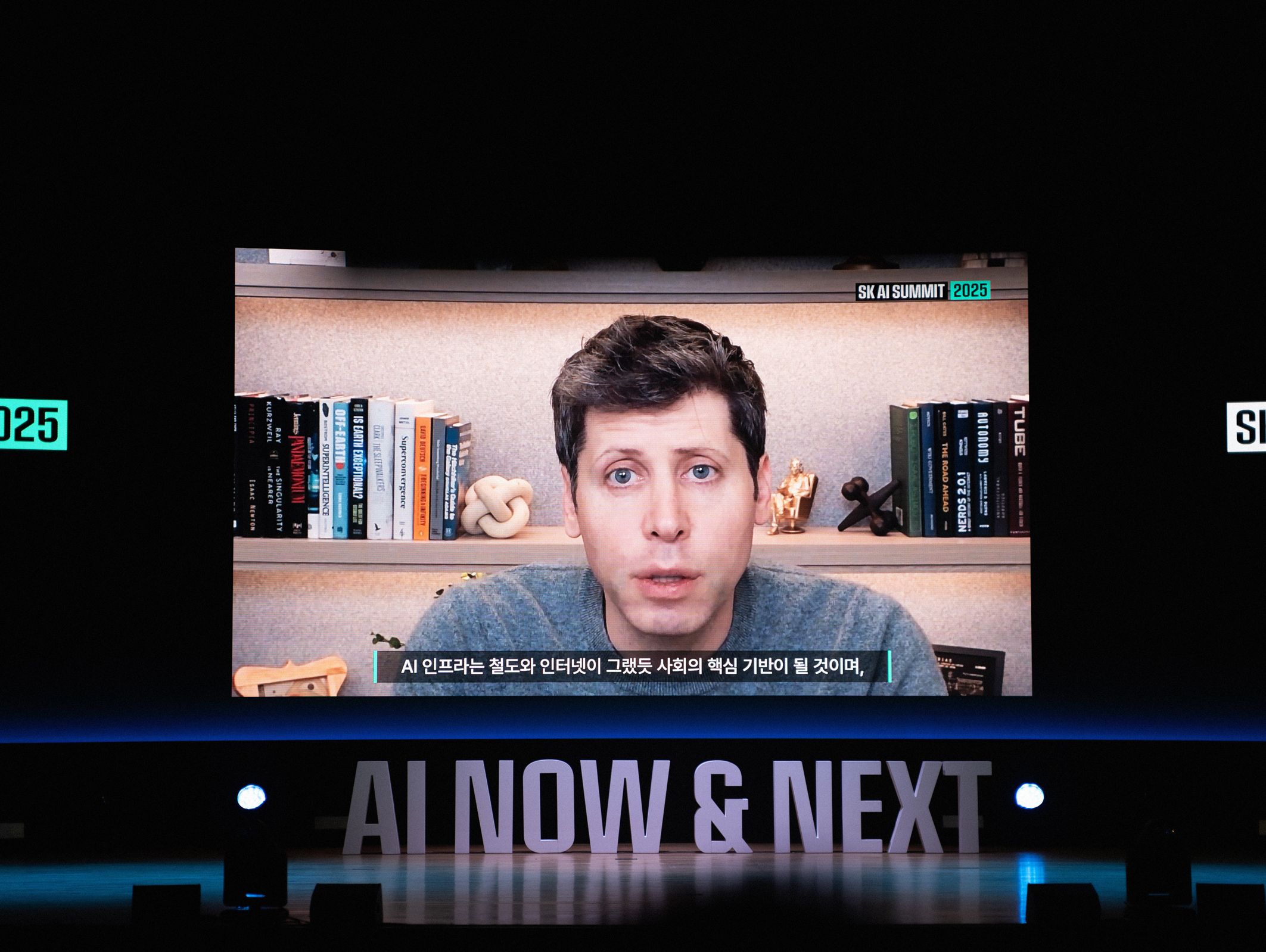

OpenAI's support for SB 3444 represents a distinct shift in its legislative strategy, moving from opposing bills that would impose liability on AI labs to championing a specific model that prioritizes national consistency over state-by-state enforcement. Caitlin Niedermeyer, testifying on behalf of OpenAI's Global Affairs team, argued that the bill serves as a necessary stepping stone toward a federal framework. The company contends that a patchwork of inconsistent state laws creates friction for developers and dilutes safety efforts, a sentiment echoed by the broader Silicon Valley consensus on preserving US leadership in the global AI race.

The argument hinges on the belief that state-level regulations, while well-intentioned, may inadvertently hinder the deployment of beneficial technology or create regulatory arbitrage in which companies simply shift operations to more permissive jurisdictions. By backing a bill that sets a high bar for liability while emphasizing transparency reporting, OpenAI attempts to position itself as a reasonable stakeholder advocating for stability. However, this approach faces skepticism from policy advocates who see such leniency as at odds with the state's history of aggressive tech regulation.

The Paradoxical Political Landscape in Illinois

Illinois presents a paradoxical environment for such legislation. The state was the first to pass laws regulating AI use in mental health services and enacted the Biometric Information Privacy Act, signaling a willingness to intervene in corporate practices. Yet the current administration has shown signs of aligning with federal priorities that seek to reduce regulatory burdens on the tech sector. This tension suggests that while SB 3444 might pass as a symbolic gesture toward national harmonization, its practical implementation could face significant hurdles from state lawmakers skeptical of corporate immunity.

The Future of AI Accountability and Legal Precedents

The passage or failure of this bill will likely set a precedent for how the United States approaches AI accountability in the absence of federal legislation. With the Trump administration opposing state-level safety laws and pressing for a unified national standard, the resistance to liability from industry giants like OpenAI suggests that strict liability for AI-induced disasters may be stalled indefinitely. The current trajectory favors a model where safety is managed through self-regulation and reporting requirements rather than court-mandated restitution for victims of mass casualties or financial ruin.

The legal question remains unanswered: if an autonomous system causes a catastrophe without human intent, who bears the cost? SB 3444 answers by placing that burden on the public, under the guise of corporate transparency. For now, the bill offers a glimpse into a future where frontier AI developers operate with significant legal protection, provided they can demonstrate adherence to a self-defined set of safety protocols. Whether Illinois will embrace this vision or double down on its history of strict tech regulation remains an open question in the ongoing negotiation between innovation and public safety.