YouTube is ramping up its fight against deepfakes by extending its AI likeness detection technology to the entertainment industry. The move marks a significant escalation in the platform's effort to police synthetic identity and counter the misuse of generative AI. By deploying the tool more broadly, YouTube aims to curb fraudulent advertisements and misinformation fueled by highly convincing deepfakes.

The expansion represents a shift from reactive moderation toward a proactive, automated defense system designed to protect high-profile figures from impersonation.

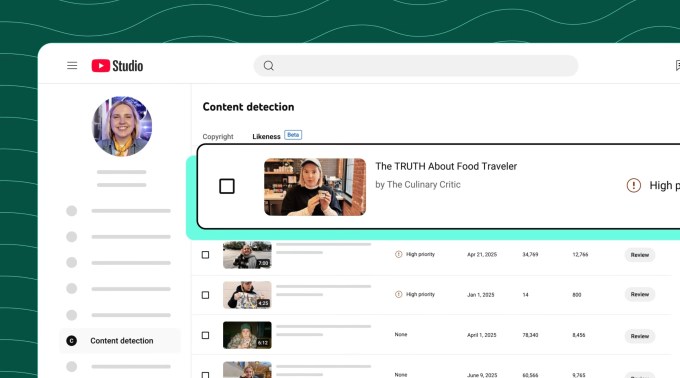

A Content ID Approach to Human Identity

The new feature's architecture draws direct inspiration from Content ID, YouTube's established mechanism for managing copyright-protected media. Just as Content ID scans uploads for unauthorized music or film clips, the likeness detection tool scans AI-generated content for visual matches of an enrolled participant's face.

In effect, the approach treats an individual's physical appearance as a form of intellectual property, allowing the platform to monitor and protect faces with the same rigor it applies to a studio's film catalog.

The rollout is an industry-level integration rather than a simple consumer update. YouTube has secured the cooperation of several major talent agencies and management firms, including:

- Creative Artists Agency (CAA)

- United Talent Agency (UTA)

- William Morris Endeavor (WME)

- Untitled Management

This collaboration allows the platform to enroll participants through their representatives, so a celebrity does not need an active YouTube channel to benefit from the protection. When the system flags a match, the enrolled party or their agency can request removal on privacy grounds or pursue a copyright claim. YouTube has stated explicitly, however, that its policies will continue to permit content categorized as parody or satire.

Expanding YouTube's AI Likeness Detection to Hollywood

The deployment comes during a period of intense scrutiny of social media platforms' responsibilities in the age of deepfakes. YouTube has expanded the tool beyond its initial pilot program, which focused specifically on journalists and government officials, to the broader entertainment sector.

The technical challenges remain substantial, but the company is building out the infrastructure to meet them. YouTube has acknowledged that the number of successful removals through the tool remains "very small," and it is preparing the system to handle a much larger influx of synthetic media.

YouTube's roadmap for the technology points to a more comprehensive approach to digital identity. The company plans to add audio detection, which would let the platform identify unauthorized recreations of a person's voice and address one of the hardest aspects of generative AI to moderate: voice cloning.

Beyond technical deployment, YouTube is also engaging in the legislative arena. The company has publicly voiced support in Washington, D.C., for the NO FAKES Act, proposed federal legislation that would regulate the unauthorized use of an individual's voice and likeness through AI. This dual-track strategy suggests that the platform views synthetic impersonation as a fundamental risk to its long-term ecosystem.

The Verdict

YouTube’s expansion of likeness detection is a necessary, albeit incomplete, step toward managing the chaos of the generative era. While no automated system can perfectly distinguish between a malicious deepfake and a creative parody, providing talent agencies with tools to monitor digital identity at scale creates a higher barrier for bad actors.

The true test will be whether this technology can evolve fast enough to keep pace with increasingly sophisticated diffusion models and voice cloning tools, which are becoming more accessible every day.