The Invisible Hand Guiding AI Output

The cursor blinks, a silent sentinel in a chat window that is rapidly reshaping how we process information. The information landscape is being altered not by technology in the abstract but by language models that echo, argue, and occasionally mislead. These systems increasingly shape our daily decisions, acting as an invisible hand on the information diet of billions.

This raises a critical question: who decides what AI tells you?

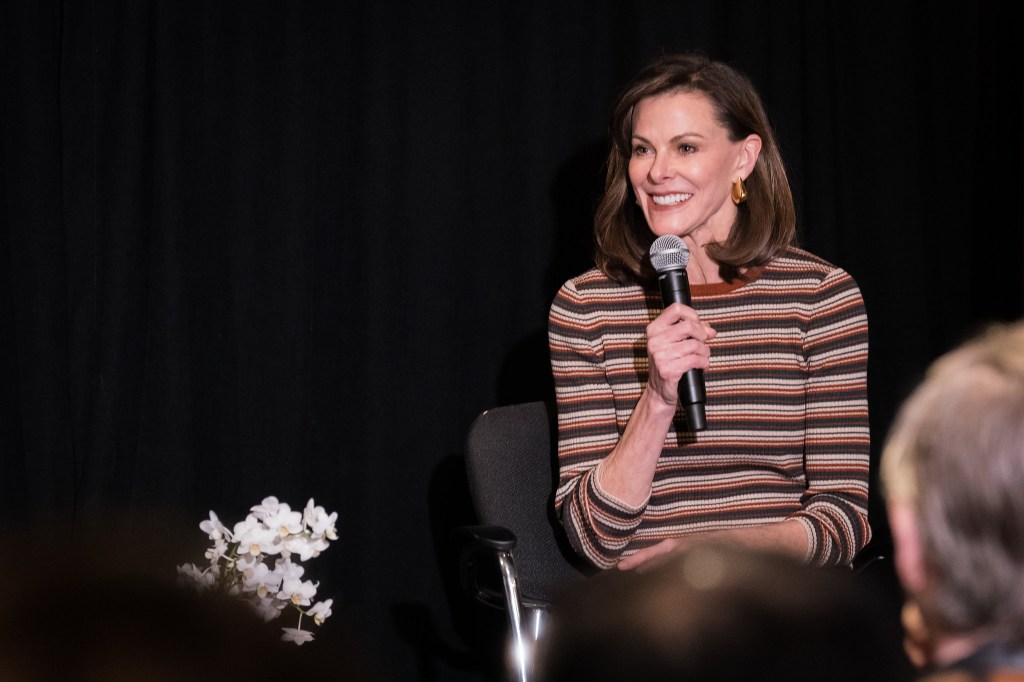

Campbell Brown, once Meta’s news chief, is now at the forefront of this debate. Through her latest venture, Forum AI, she is testing whether human judgment can recalibrate the outputs of artificial intelligence so that they reflect informed human consensus rather than algorithmic bias.

Aligning Algorithms with Human Consensus

Brown’s career has spanned broadcast journalism, social media platforms, and now, algorithmic governance. Forum AI positions her at the intersection of journalism and machine learning, aiming to solve one of the industry’s most pressing problems: the reliability of AI on high-stakes topics.

The company evaluates foundation models on subjects where nuance is paramount: geopolitics, finance, and hiring practices. To do this, Brown’s team trains small panels of experts to serve as the benchmark for truth. The goal is ambitious: to align AI outputs with a consensus derived from prominent voices such as:

- Niall Ferguson and Fareed Zakaria on global affairs.

- Tony Blinken and Kevin McCarthy on political strategy.

- Anne Neuberger on cybersecurity and national security.

By targeting roughly 90% agreement with this expert consensus on complex subjects, Forum AI seeks to set a standard for accurate, nuanced information in an era of automated content generation.

The Compliance Gap and Market Reality

Despite these lofty ambitions, public trust remains fragile. Audits conducted by Forum AI have revealed a troubling reality: many existing models still produce biased or incomplete answers when handling edge cases in policy analysis or hiring.

Brown points to significant regulatory gaps where compliance checks are often perfunctory. As one example, she cites violations of New York City’s hiring law that went undetected by automated systems lacking the necessary depth of understanding.

“Smart generalists aren’t going to cut it,” Brown asserts. In her view, the industry needs to move beyond simple benchmark scores toward genuine domain expertise; without that shift, AI will keep failing in critical decision-making scenarios.

Enterprise Demand as a Catalyst for Truth

While consumer markets may be driven by novelty, enterprise buyers are driven by liability and accuracy. Companies that use AI to inform credit decisions or hiring processes demand higher standards, creating a viable path for Forum AI toward sustainable revenue.

Brown believes that this enterprise demand can be the catalyst for real improvement. If buyers force vendors to prioritize truthfulness, market pressure can help counter the “optimize for engagement” mindset that amplified misinformation in earlier platform eras. When businesses pay for accuracy, they push the industry toward more reliable, ethical outputs.

The Future of Authority in the AI Age

The path forward hinges on making AI judges (models that grade other models’ answers) more reliable than human majority consensus. This requires ensuring that outputs meet strict ethical and factual thresholds, even as challenges like model drift and resource-intensive evaluations persist.

The combination of domain expertise, stricter audits, and market incentives may finally deliver a trustworthy information layer. However, the underlying question remains urgent: who holds the authority to steer the narrative?

In the absence of clear guardrails, the responsibility falls heavily on those who understand both the stakes and the data behind them. As AI continues to shape what we see and hear, the authority to define truth will likely rest with those who can bridge the gap between human nuance and machine scale.