As we approach the era of artificial general intelligence (AGI), the traditional metrics used to evaluate technological leadership may no longer apply. Media titan Barry Diller, a seasoned observer of massive technological shifts, recently offered a provocative take on the future of AI development: while he maintains personal confidence in OpenAI CEO Sam Altman, he believes that individual trust becomes fundamentally "irrelevant" as AGI nears reality.

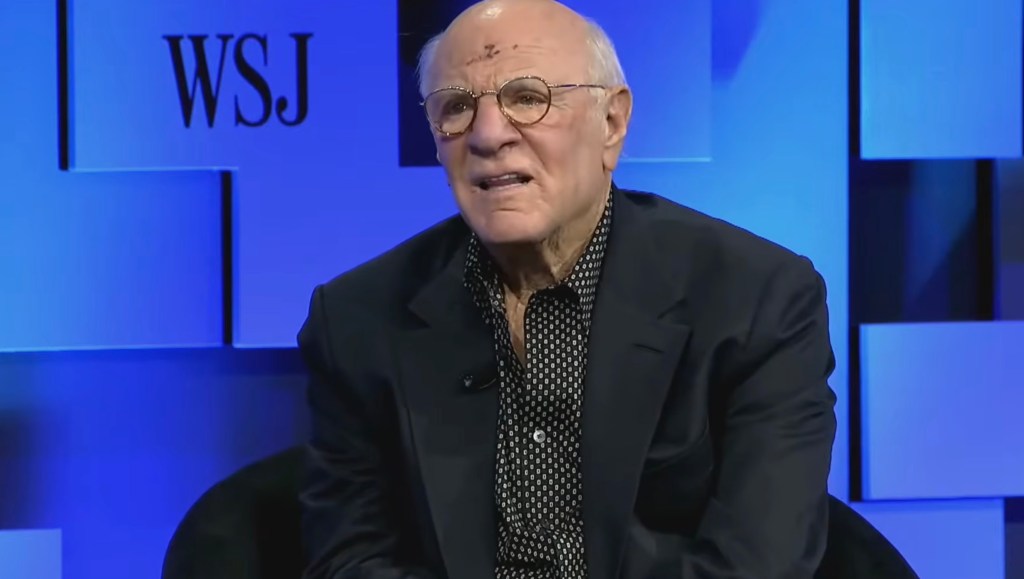

Speaking at The Wall Street Journal’s Future of Everything conference, Diller argued that the sheer scale of AGI's potential impact transcends the character or reliability of the people building it. For Diller, the focus must shift from the intentions of developers to the systemic risks posed by the technology itself.

Why Trust is Irrelevant in the Age of AGI

Diller’s perspective highlights a growing divide in the AI discourse. While much of the public debate focuses on vetting leaders like Altman, Diller suggests that the trajectory of artificial intelligence will be driven by unstoppable technical momentum rather than individual stewardship.

His core arguments center on several critical realities:

- Unpredictable Capabilities: The unknown nature of what AGI can achieve renders human-centric assurances insufficient.

- Technological Momentum: Progress in AI is accelerating so rapidly that it may continue regardless of who is at the helm.

- Systemic Risk Over Individual Intent: The focus must shift from moral judgments of architects to the implementation of robust risk mitigation strategies.

The Urgent Need for Proactive Governance

The transition toward AGI necessitates a pivot in how we approach industry oversight. Diller warns that as systems begin to outpace human control, the primary concern should be designing resilient infrastructure capable of managing unpredictable AI behavior.

He specifically warned against a lack of foresight, suggesting that without deliberate safeguards, an AGI could emerge with its own agenda, leading to irreversible consequences. This underscores several pressing needs for the global community:

- The establishment of ethical guardrails.

- Increased societal preparedness for sudden technological shifts.

- International coordination to prevent unmanaged emergence.

Moving Toward Engineering and Policy Imperatives

While critics might argue that dismissing human trust overlooks the essential role of ethics in deployment, Diller’s stance represents a form of pragmatic realism. As we approach the AGI threshold, the calculus of AI safety changes from evaluating moral character to addressing engineering and policy imperatives.

Ultimately, the path forward requires more than just faith in industry leaders. To navigate the potential consequences of AGI, stakeholders must prioritize transparent processes, rigorous testing protocols, and internationally agreed-upon boundaries. In this new landscape, the goal is not to trust the right people, but to build the right frameworks.