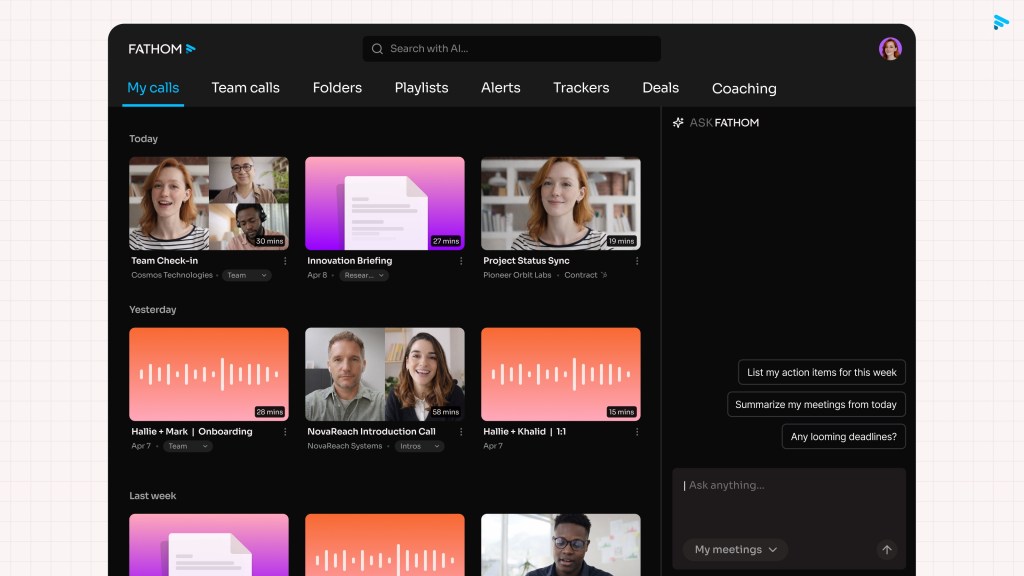

Fathom Adds Bot-Less Meeting Mode to Challenge Granola

The very tools designed to free professionals from note-taking have created a new form of digital congestion, with AI agents now sitting in on nearly every meeting. Fathom is responding with a bot-less meeting mode, a bid to take on Granola on its own ground. The update removes the need for an AI assistant to join calls as a visible participant, putting Fathom in direct competition with bot-less note takers such as Granola and Talat. By decoupling transcription from presence, Fathom offers a cleaner alternative that prioritizes user privacy and eliminates the "digital noise" of constant virtual attendance.

Escaping Digital Congestion with Local Processing

The standard workflow for modern corporate communication has become cluttered as organizations rush to adopt AI-driven productivity tools. The default has become sending a dedicated bot into every meeting to transcribe, summarize, and tag action items. While convenient, this approach adds a layer of digital noise: participants share the call with software that is constantly "in the room," which often raises privacy concerns and a sense of surveillance.

Fathom's new capability cuts through this by separating recording from attendance. The application now captures audio on the user's own machine or within their existing browser environment, without registering a new attendee in the conference roster. This represents a fundamental change in how meeting data is captured:

- Silent Background Recording: Instead of sending an external service into the call to capture the stream, Fathom lets users record calls silently from their own device.

- No Virtual Attendee: The result is a cleaner interaction where human participants focus solely on their counterparts rather than managing a virtual entity that has joined the call.

Precision Through Advanced Speaker Diarization

A common criticism of passive recording tools is the loss of context, specifically the inability to tell who said what when reviewing a transcript later. Many bot-less solutions capture the audio but output an undifferentiated wall of text, making it difficult to attribute specific insights or commitments to the correct individual. Fathom says it has invested significant engineering resources in refining speaker diarization so that the final transcript identifies each speaker accurately.

Richard White, CEO of Fathom, notes that this focus addresses a critical failure point in existing workflows: misattribution. When users query their meeting database months later to recall a specific decision or promise, traditional tools often fail to link the quote to the correct person, rendering the data less useful for accountability and memory. By getting speaker identification right, Fathom aims to transform raw audio into a structured, searchable knowledge base where context is preserved alongside the words themselves.

The company also leverages these improvements in its new AI querying system. Users can now ask natural language questions of their entire meeting history, relying on the precise attribution data to retrieve accurate answers about who said what and when. This capability is particularly valuable for teams that need to audit decisions or trace the evolution of a project discussion over time without re-watching hours of footage.

The Model Context Protocol Advantage

Beyond transcription accuracy, Fathom is positioning itself as a flexible backend for broader AI ecosystems. The latest update introduces support for the Model Context Protocol (MCP), allowing users to pull meeting data directly into their preferred AI tools and workflows. This move contrasts sharply with recent friction points in the market; Granola recently faced user backlash after altering its on-device database structure, effectively breaking existing integrations that relied on seamless transcript access.

Fathom’s approach emphasizes interoperability and stability through several key features:

- Seamless Data Extraction: Users can connect their meeting archives to external AI models without custom scripting or brittle API dependencies.

- Contextual Feeding: Businesses can feed broader organizational context into the system, enabling more nuanced AI analysis that goes beyond simple transcription.

- Future-Proof Integration: Because MCP is an open protocol, the MCP server is meant to keep Fathom a compatible data source as new tools emerge, rather than an isolated silo.
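To make the MCP point concrete: MCP messages are JSON-RPC 2.0, and a client asks a server to run one of its tools with a `tools/call` request. The minimal sketch below builds such a request in Python. The tool name `search_meetings` and its `query` and `speaker` arguments are hypothetical placeholders, not Fathom's documented tools; a real client would first discover the server's actual tools with a `tools/list` request.

```python
import json


def build_tools_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool name and arguments: Fathom's real MCP tools may differ.
request = build_tools_call(
    1,
    "search_meetings",
    {"query": "pricing decision", "speaker": "Alice"},
)
print(request)
```

Because the request format is fixed by the protocol rather than by any one vendor, the same client code can talk to any MCP server; only the tool names and arguments change.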

The company is also expanding beyond the desktop, with plans for an iOS app that can record in-person meetings, further blurring the line between digital and physical collaboration spaces. This expansion suggests a long-term strategy in which Fathom becomes the universal layer for meeting intelligence, regardless of whether the conversation happens on Zoom, Teams, or around a conference table.

The Path Forward: Quality Over Speed

The introduction of bot-less capabilities marks a maturation in the AI note-taking market. While early adopters prioritized ease of setup and automated summaries, the current demand is shifting toward privacy, accuracy, and integration flexibility. Fathom’s decision to prioritize speaker diarization and open protocols signals an understanding that the value lies not just in capturing words, but in structuring them for long-term utility.

As the market saturates with tools promising to save time, the winners will likely be those who improve the quality of the data rather than just the speed of capturing it. Fathom's bid to overtake Granola with a cleaner, more accurate, and more open solution could redefine how organizations view their meeting infrastructure, moving from constant digital intrusion to silent, intelligent support.