The transition from general-purpose computing to highly specialized silicon marks a fundamental shift in how AI workloads are processed. As the explosion of large language models forces a pivot toward hardware capable of massive parallelization, Google Cloud has launched two new AI chips to meet this demand and compete with Nvidia. The announcement of its eighth-generation Tensor Processing Units (TPUs) signals that custom silicon is moving from an experimental phase into a structural reality for global cloud infrastructure.

How Google Cloud's Two New AI Chips Compete with Nvidia via Specialized Silicon

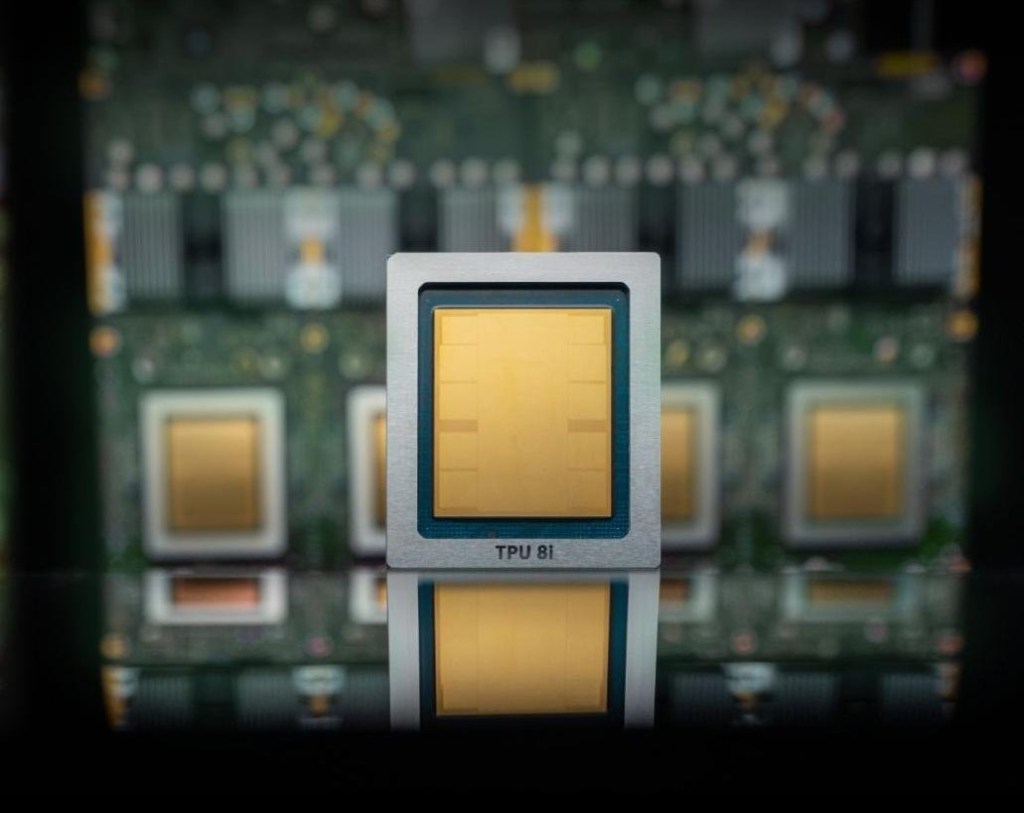

The latest iteration of Google's custom silicon breaks away from a monolithic design, splitting the architecture into two distinct paths: the TPU 8t and the TPU 8i. This bifurcation acknowledges that the lifecycle of an AI model involves vastly different computational requirements at different stages.

While the TPU 8t is optimized for the heavy lifting required during model training, the TPU 8i focuses on inference. Inference represents the ongoing usage of models, specifically the critical moment when a user submits a prompt and the hardware must generate a response. By separating these workloads, Google can optimize the power and architecture of each chip for its specific task.

The performance delta between this generation and its predecessors is significant:

- Up to 3x faster AI model training speeds compared to previous generations.

- An 80% improvement in performance per dollar, addressing the escalating costs of AI development.

- Scalability that allows for over one million TPUs to function within a single unified cluster.

- Enhanced energy efficiency designed to mitigate the massive power demands of modern data centers.
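The headline percentages are easy to misread, so a small worked calculation may help. The sketch below only converts the figures quoted above into relative terms. The function names and the 300-hour baseline are illustrative assumptions, not figures from Google. Note that an 80% gain in performance per dollar does not mean an 80% price cut: the same workload would cost roughly 56% of what it did before, a saving of about 44%.

```python
def relative_cost(perf_per_dollar_gain: float) -> float:
    """Cost of a fixed workload relative to the baseline (1.0 = unchanged),
    given a fractional gain in performance per dollar (0.80 means +80%)."""
    if perf_per_dollar_gain <= -1.0:
        raise ValueError("gain must be greater than -1.0")
    return 1.0 / (1.0 + perf_per_dollar_gain)


def training_time(baseline_hours: float, speedup: float) -> float:
    """Wall-clock time for the same training job after a given speedup factor."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return baseline_hours / speedup


# Figures quoted in the list above: +80% performance per dollar, up to 3x training speed.
cost = relative_cost(0.80)
print(f"Relative cost for the same work: {cost:.3f}")  # 0.556
print(f"Implied saving: {1.0 - cost:.1%}")             # 44.4%

# Hypothetical 300-hour baseline job, assuming the full 3x speedup is achieved.
print(f"Training time: {training_time(300.0, 3.0):.1f} hours")  # 100.0 hours
```

Because the 3x figure is an "up to" claim, the 100-hour result is a best case rather than an expected value.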

A Coexistence Strategy and the Nvidia Ecosystem

Despite these aggressive hardware advancements, Google is not trying to displace Nvidia outright. The cloud giant continues to rely on Nvidia's ecosystem and has promised that its infrastructure will support the upcoming Vera Rubin architecture later this year.

This strategy suggests a "both/and" approach rather than "either/or." In this model, custom silicon handles specific high-scale workloads while Nvidia remains the gold standard for broader, more versatile GPU-based tasks. That is why the two new chips matter most to enterprises with specialized, high-scale needs.

This era of coexistence is further evidenced by Google's collaborative efforts in the networking layer. The company is working alongside Nvidia to refine Falcon, an open-source software-defined networking technology created by Google in 2023. This initiative aims to optimize how Nvidia-based systems interact within Google’s cloud infrastructure, ensuring that even when using third-party chips, the underlying network fabric remains highly efficient.

The Long-Term Economic Implications of Custom Silicon

The economic gravity of AI development is increasingly pulling toward cost-per-token and energy-per-compute metrics. As enterprises migrate their AI needs to the cloud, the ability to port applications to highly efficient, custom-built hardware becomes a competitive necessity.
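To make these metrics concrete, here is a minimal sketch of how cost per token and energy per token could be computed for an accelerator. The `Accelerator` class, both chip entries, and every number in them are hypothetical placeholders chosen for easy arithmetic. They are not published prices, throughputs, or power figures for any TPU or GPU. Joules per token is used here as one plausible stand-in for "energy per compute".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Accelerator:
    """Hypothetical accelerator profile; all fields are illustrative assumptions."""
    name: str
    hourly_price_usd: float   # rental price per chip-hour
    tokens_per_second: float  # sustained inference throughput
    power_watts: float        # average power draw under load

    def cost_per_million_tokens(self) -> float:
        tokens_per_hour = self.tokens_per_second * 3600
        return self.hourly_price_usd / tokens_per_hour * 1_000_000

    def joules_per_token(self) -> float:
        # Watts are joules per second, so dividing by tokens per second gives joules per token.
        return self.power_watts / self.tokens_per_second


# Made-up profiles for comparison only.
chips = [
    Accelerator("hypothetical-gpu", hourly_price_usd=4.0, tokens_per_second=2000.0, power_watts=700.0),
    Accelerator("hypothetical-custom", hourly_price_usd=3.0, tokens_per_second=2500.0, power_watts=500.0),
]

for chip in chips:
    print(
        f"{chip.name}: ${chip.cost_per_million_tokens():.3f} per 1M tokens, "
        f"{chip.joules_per_token():.2f} J per token"
    )
```

With these placeholder inputs, the second profile comes out cheaper and more energy-efficient per token, but only because the inputs were chosen that way. A real comparison would need measured throughput on the specific model being served.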

If hyperscalers like Google, Amazon, and Microsoft can continue to scale their bespoke architectures, reliance on general-purpose GPUs may slowly erode for high-density, specialized workloads. Nvidia still dominates the current landscape, but the blueprint for the future is being drawn in custom silicon.

Ultimately, the success of these chips will depend on how seamlessly they can integrate into an ecosystem that is still, for now, fundamentally built around Nvidia's architecture. As training requirements grow exponentially, the industry will likely move toward a more fragmented, yet highly efficient, landscape of specialized chips.