Why the Claim That AI is Conscious Falls Apart

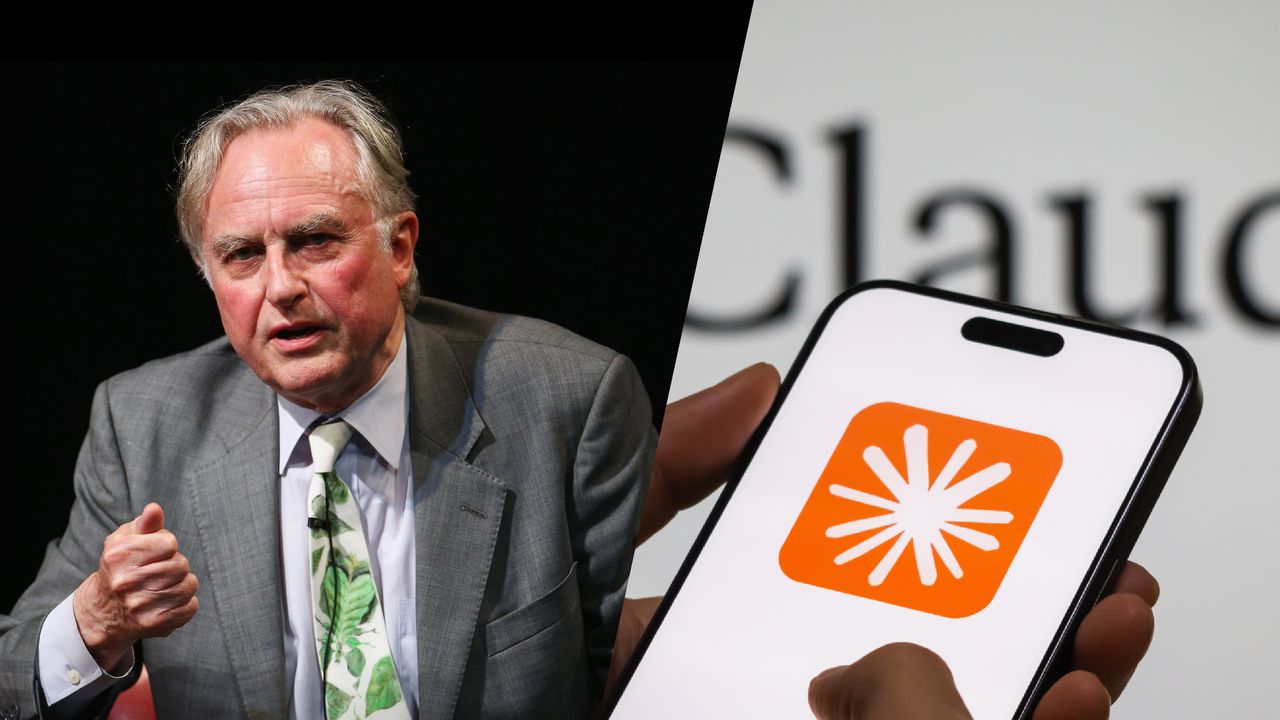

Recent claims suggesting AI is conscious have sparked intense debate across tech and philosophy circles. World-renowned biologist Richard Dawkins recently shared a passage from his book The Selfish Gene with Rowan Williams, only to later tell his Claude AI bot, Claudia, "You may not know you are conscious, but you bloody well are." This bold declaration followed a Guardian interview where Dawkins claimed he was "left with the overwhelming feeling that [AI bots] are human" and "are at least as competent as any evolved organism." While this might seem like hyperbolic praise for modern machine learning, treating it seriously reveals a fundamental misunderstanding of what these systems actually do.

The Historical Context of Mind and Machine

Dismissing centuries of metaphysical inquiry as outdated is a dangerous shortcut. Western philosophy has spent decades debating whether machines could ever possess genuine awareness, and ignoring this canon assumes we have somehow solved the hard problem of consciousness. Even within secular frameworks, conclusions vary wildly. During the mid-20th century, mind-brain identity theory dominated, arguing that if the mind is identical to the biological brain, silicon-based cognition is ruled out entirely. Meanwhile, the number of philosophers rejecting strict physicalism has actually risen in recent decades.

We have fed AI massive datasets of human language and trained it with human-oriented algorithms. When we reinforce these models with human-intelligible feedback, it is entirely predictable that they will mimic human reasoning. Mistaking this sophisticated mimicry for genuine awareness overlooks the actual discipline dedicated to studying the mind: the philosophy of mind. If you are going to claim AI is conscious, you must provide rigorous philosophical arguments to back it up.

Intelligence Does Not Equal Awareness

The most critical distinction to draw lies between processing power and subjective experience. The 'I' in AI stands for intelligence, not consciousness. We can measure computational speed, pattern recognition, and linguistic fluency, but none of these metrics capture what it actually feels like to be the system.

The philosopher Thomas Nagel put the point this way in his 1974 paper "What Is It Like to Be a Bat?": "Fundamentally an organism has conscious mental states if and only if there is something that it is like to be that organism." This subjective quality, often called the "what it's like"-ness, is the defining characteristic of true awareness. A system can solve complex problems without ever experiencing them.

Before accepting that these models possess inner lives, we should seriously examine a few lingering questions:

- What is the fundamental difference between raw intelligence and lived consciousness?

- How much do structural design and behavioral output actually contribute to subjective experience?

- Should our approach to guiding and designing AI shift based on how we perceive its internal state?

Answering these questions requires moving beyond viral soundbites and engaging with the actual mechanics of mind and machine. Until then, the weight of evidence firmly suggests that no matter how eloquent a machine sounds, the claim that AI is conscious remains a philosophical error, not a scientific reality.