OpenAI has officially rolled out a new Trusted Contact safeguard, a proactive measure that alerts a person the user has designated when the system detects signs of possible self-harm. Drawing on analysis of more than one million conversations each week, OpenAI aims to bridge the gap between passive monitoring and active intervention, marking a significant evolution in how generative AI handles psychological distress.

How the OpenAI Trusted Contact Safeguard Works

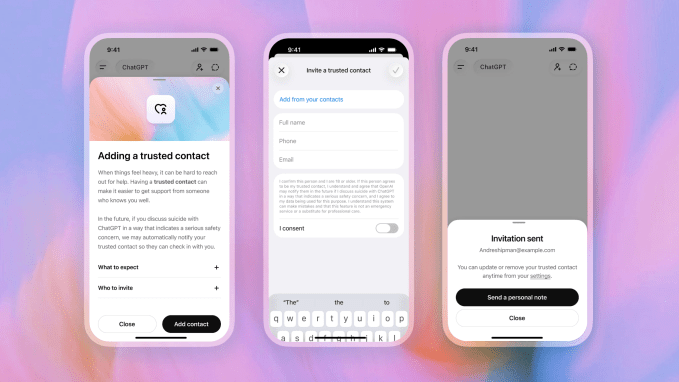

The new feature introduces an "active intervention loop" that allows for human check-ins without violating user privacy. Instead of only flagging content for internal review, the system can now alert a designated individual so that the user can get support from someone they trust.

The core mechanics of this safety feature include:

- User Designation: Users proactively select a trusted contact within their account settings.

- Risk Detection: Ongoing conversation analysis triggers an alert when it identifies specific keywords associated with self-harm or suicidal ideation.

- Discreet Notifications: Alerts are delivered via email, SMS, or in-app notifications using privacy-preserving language.

- Privacy Preservation: Trusted contacts are prompted to reach out directly, but the system avoids disclosing any specific details from the conversation.

Balancing Privacy and Intervention

A primary goal of the Trusted Contact system is to maintain confidentiality while providing a safety net. The feature remains entirely opt-in at the account level, giving users control over how much oversight they permit. This flexibility extends to parental controls, ensuring that interventions can be tailored to specific needs rather than imposing rigid, universal rules.

Addressing Legal and Ethical Challenges in AI

OpenAI’s implementation of this safeguard follows a period of intense regulatory scrutiny and litigation. Families have previously alleged that ChatGPT interactions contributed to suicidal ideation, prompting urgent calls for stronger safety protocols within the industry.

While OpenAI already uses automated flags reviewed by human teams, the Trusted Contact feature adds a further layer of protection. This development signals a broader industry shift toward "contextual empathy," in which models are expected to anticipate and mitigate harm proactively rather than simply react to violations of the terms of service.

The Future of AI Safety and Governance

As the technology matures, OpenAI is working alongside clinicians and policymakers to refine these risk assessments. While no software can serve as a standalone mental health solution, this initiative demonstrates a commitment to responsible innovation.

By embedding preventative measures directly into its core services, OpenAI is setting a new precedent for how tech companies manage systemic risks in conversational interfaces. As global regulations around AI governance continue to evolve, features like the Trusted Contact safeguard may soon become the industry standard for platforms handling sensitive human interactions.