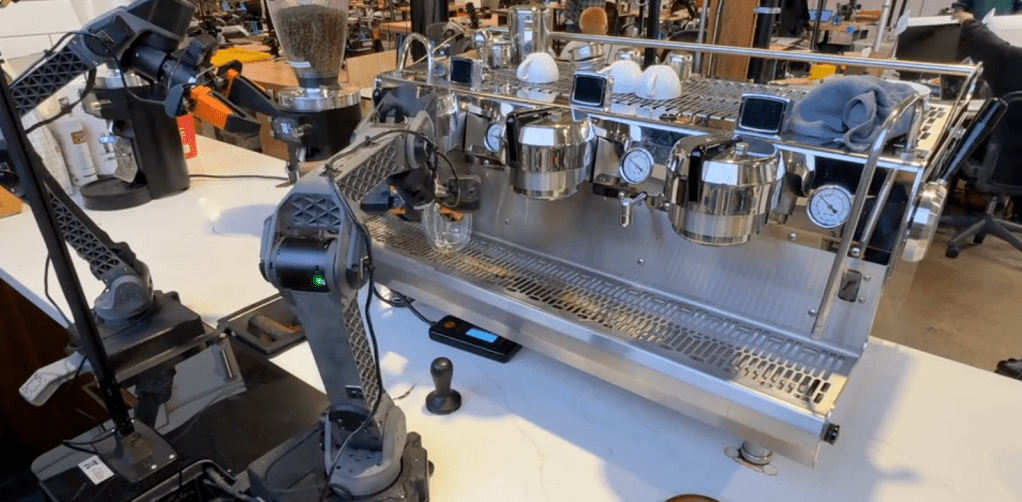

The air fryer sits untouched on the counter, an appliance the robot has never been explicitly trained to handle. When the arm hovers over it, the gripper pauses, as if working through the problem rather than replaying a pre-recorded script. Instead of failing, the system combines fragments from disparate sources (a push that shut the appliance, the placement of a plastic bottle) to work out the task on the fly. This behavior is the signature capability of Physical Intelligence, a San Francisco startup whose new robot brain can figure out tasks it was never taught.

This scene illustrates the breakthrough of the company's latest model, π0.7, which moves beyond rote memorization to achieve compositional generalization: recombining skills it has already learned to handle tasks it has not seen. The implications are stark: if this capability holds up, robotic AI is approaching an inflection point similar to the explosion seen with large language models.

From Specialized Tools to Generalist Learners

For years, the robotics industry has operated on a model of extreme specialization that limited its potential. To train a robot to fold laundry, engineers collected terabytes of data specifically for folding clothes, building a single-purpose model that failed catastrophically if asked to do anything else, like making coffee or assembling a box. The standard approach was essentially industrial rote learning: collect data, train a specialist, and repeat for every new task.

Physical Intelligence breaks this pattern by treating the robot as a generalist learner rather than a specialized tool. The company describes its new model, π0.7, as a "robot brain" capable of remixing learned skills for entirely novel tasks. In the air fryer experiment, researchers found only two relevant episodes in the entire training dataset: one in which a different robot merely pushed the appliance shut, and another from an open-source dataset involving a plastic bottle. Yet π0.7 combined these fragments to attempt the task autonomously.

Sergey Levine, a co-founder of Physical Intelligence and a UC Berkeley professor, notes that once a model crosses the threshold from doing exactly what it was trained on to remixing elements in new ways, the scaling properties change fundamentally. The researchers observed that capabilities now grow more than linearly with data, mirroring trends seen in language and vision domains but previously elusive in physical manipulation.

The Critical Role of Human Coaching

For all the technical promise, the path from lab curiosity to reliable assistant still depends heavily on the humans involved. The research team candidly admitted that a significant share of failures stemmed not from the robot or the model itself, but from the quality of the instructions the engineers provided. In one instance involving the air fryer:

- Initial Result: The success rate hovered around 5% without refined guidance.

- The Intervention: Thirty minutes spent refining how the task was explained to the model.

- Final Result: The success rate jumped to 95% through a process akin to real-time coaching.

This "coaching" capability suggests a future where robots are not deployed once and left alone, but iteratively improved in real time without the need for costly data collection cycles or full model retraining. However, Levine is quick to temper expectations regarding autonomy. The system cannot yet execute complex multi-step commands from a single high-level prompt like "make me some toast." Instead, it requires a step-by-step walkthrough: "open this part, push that button, do this."

The team highlighted several key takeaways from their testing phase that define the current state of the field:

- Generalization over Stunts: The distinction between an impressive demo and a generalizing system is crucial; the robot will not perform backflips, but it can adapt to new environments.

- Lack of Standardized Benchmarks: Without industry-standard tests for robotics, validation relies on comparisons against previous specialist models across tasks like folding laundry and assembling boxes.

- Surprise as a Metric: Researchers found themselves genuinely surprised by the model's behavior, such as successfully rotating a randomly purchased gear set—a feat they could not predict based on their deep knowledge of the training data.

Valuing Generalization in a $6 Billion Startup

Investors appear to agree that general-purpose robotics represents the next frontier. Physical Intelligence is currently in discussions for a funding round that would nearly double its valuation to $11 billion, driven by a cohort of investors including Lachy Groom. Groom's previous successes with Figma and Notion underscore his appetite for transformative technology. The company's restraint regarding commercial timelines has only heightened the intrigue; unlike many startups chasing immediate ROI, Physical Intelligence is betting on generalization itself as its primary source of value.

Critics may argue that operating an air fryer is a boring task compared to the spectacle of humanoid robots dancing or running marathons. Levine pushes back on this framing, suggesting that the ability to generalize in mundane settings is precisely what makes a system useful rather than merely impressive. A key asymmetry remains: language models had the entire internet as their training set, while robots must rely on physical data. Yet if π0.7 can bridge even a fraction of that gap through clever architecture and pretraining strategies, the trajectory points toward a future where robots are not just tools for specific jobs, but adaptable partners capable of learning new skills in real time.

As the field waits for standardized benchmarks to emerge, Physical Intelligence is making the case that a robot brain can figure out tasks it was never taught, a potential shift from rigid automation to flexible intelligence.