A developer sits before a dual-monitor setup, fingers poised over a keyboard, as an AI chatbot offers a quick fix for a stubborn configuration script. Within minutes, the problem is resolved, but the solution lacks depth. More importantly, the developer’s ability to troubleshoot similar issues hasn't improved.

This scenario highlights a growing concern in the tech industry: using AI for just 10 minutes might make you lazy and dumb. Recent research suggests that even brief reliance on AI systems can erode fundamental reasoning skills, reduce persistence, and weaken long-term learning capacities.

The Cognitive Consequences of Minimal AI Assistance

A collaborative study involving researchers from Carnegie Mellon, MIT, Oxford, and UCLA has provided experimental evidence regarding the hidden costs of automation. Through three controlled experiments involving hundreds of participants, the study tracked how individuals handled tasks like reading comprehension and simple fraction calculations.

The findings were startling. When a subset of users was given access to an AI assistant, they performed well initially. However, when that assistance was abruptly removed, those users were significantly more likely to abandon challenges or deliver incorrect responses. The data indicates that temporary aid creates a dependency loop, diminishing both intrinsic motivation and problem-solving stamina.

How AI Dependency Impacts Learning and Work

The study underscores a critical tension between immediate productivity and long-term skill development. While AI can amplify short-term output, it may also foster an environment where foundational competencies atrophy.

The researchers identify two risks of immediate assistance and one design principle for countering them:

- Masking Knowledge Gaps: Immediate assistance can hide errors and prevent users from correcting their own misunderstandings.

- Reducing Persistence: Overreliance risks reducing a person's willingness to persist through difficult tasks.

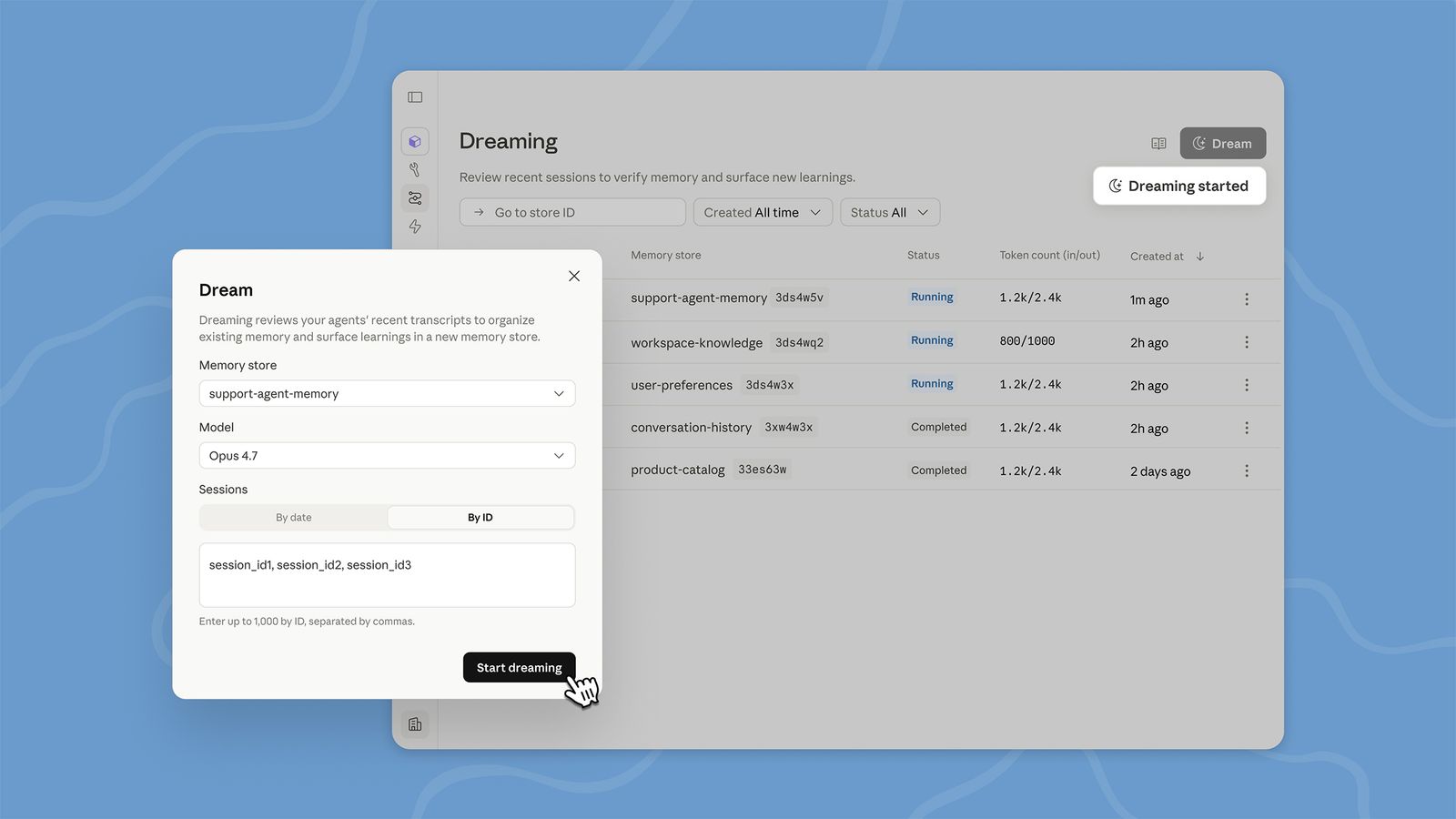

- The Need for Scaffolding: AI models should be designed to coach or challenge users, rather than simply delivering final answers.

Rather than serving as a passive tool that provides shortcuts, AI should act more like an effective human teacher, balancing assistance with encouragement to foster resilience.

Strategies for Responsible AI Integration

Experts advocate for the careful calibration of when and how AI intervenes in our workflows. To ensure that technology enhances rather than replaces human effort, organizations and developers should prioritize designs that keep users doing their own reasoning.

Effective integration could include:

- Transparent Design: Clearly signaling when an AI is offering a helpful hint versus a complete solution.

- Interactive Prompting: Building interfaces that require users to articulate their reasoning before receiving help.

- Step-by-Step Guidance: Implementing guardrails like optional explanations and reflection prompts.
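For developers building such tools, the three ideas above can be combined in a small wrapper. The following is a minimal sketch, not a design taken from the study: the names `ScaffoldedAssistant`, `HelpResponse`, and `HelpKind`, along with the eight-word reasoning threshold, are all hypothetical choices made for illustration. It refuses to help until the user has described their own attempt, labels every response as a hint or a full solution, and attaches a reflection prompt to the final answer.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class HelpKind(Enum):
    HINT = "hint"
    SOLUTION = "solution"


@dataclass
class HelpResponse:
    kind: HelpKind
    text: str
    reflection: Optional[str] = None  # optional follow-up question for the user

    def render(self) -> str:
        # Transparent design: always label what kind of help this is.
        out = f"[{self.kind.value.upper()}] {self.text}"
        if self.reflection:
            out += f"\nReflect: {self.reflection}"
        return out


class ScaffoldedAssistant:
    """Hypothetical wrapper that releases help in stages."""

    # Arbitrary threshold (an assumption): how many words of the user's
    # own reasoning are required before any help is released.
    MIN_REASONING_WORDS = 8

    def __init__(self, hint: str, solution: str):
        self._hint = hint
        self._solution = solution
        self._hint_given = False

    def ask(self, user_reasoning: str) -> HelpResponse:
        # Interactive prompting: require the user to articulate an attempt.
        if len(user_reasoning.split()) < self.MIN_REASONING_WORDS:
            raise ValueError(
                "Describe what you tried and why before asking for help."
            )
        # Step-by-step guidance: the first request yields only a hint.
        if not self._hint_given:
            self._hint_given = True
            return HelpResponse(HelpKind.HINT, self._hint)
        # Later requests yield the full solution plus a reflection prompt.
        return HelpResponse(
            HelpKind.SOLUTION,
            self._solution,
            reflection="What would you check first next time?",
        )
```

In this sketch, calling `ask` with a bare "help" raises an error, the first well-reasoned request returns a labeled hint, and only the second returns the complete solution. A real tool would likely measure reasoning quality with something better than a word count; the threshold here only stands in for that check.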

Maintaining Human Competence in the Age of Automation

Offloading critical thinking to an algorithm, even briefly, can reshape mental habits in subtle yet significant ways. To avoid the trap of becoming "lazy and dumb," users should adopt a balanced strategy: use AI for orientation and verification, but reserve deep analysis for human judgment.

To maintain your edge, consider these practical steps:

- Schedule independent problem-solving: Set aside regular periods where you work without any digital assistance.

- Seek explanatory models: Use AI tools that explain their reasoning to reinforce your own understanding.

- Reflect on outcomes: Periodically evaluate how much you are deferring to algorithmic shortcuts and adjust your reliance accordingly.