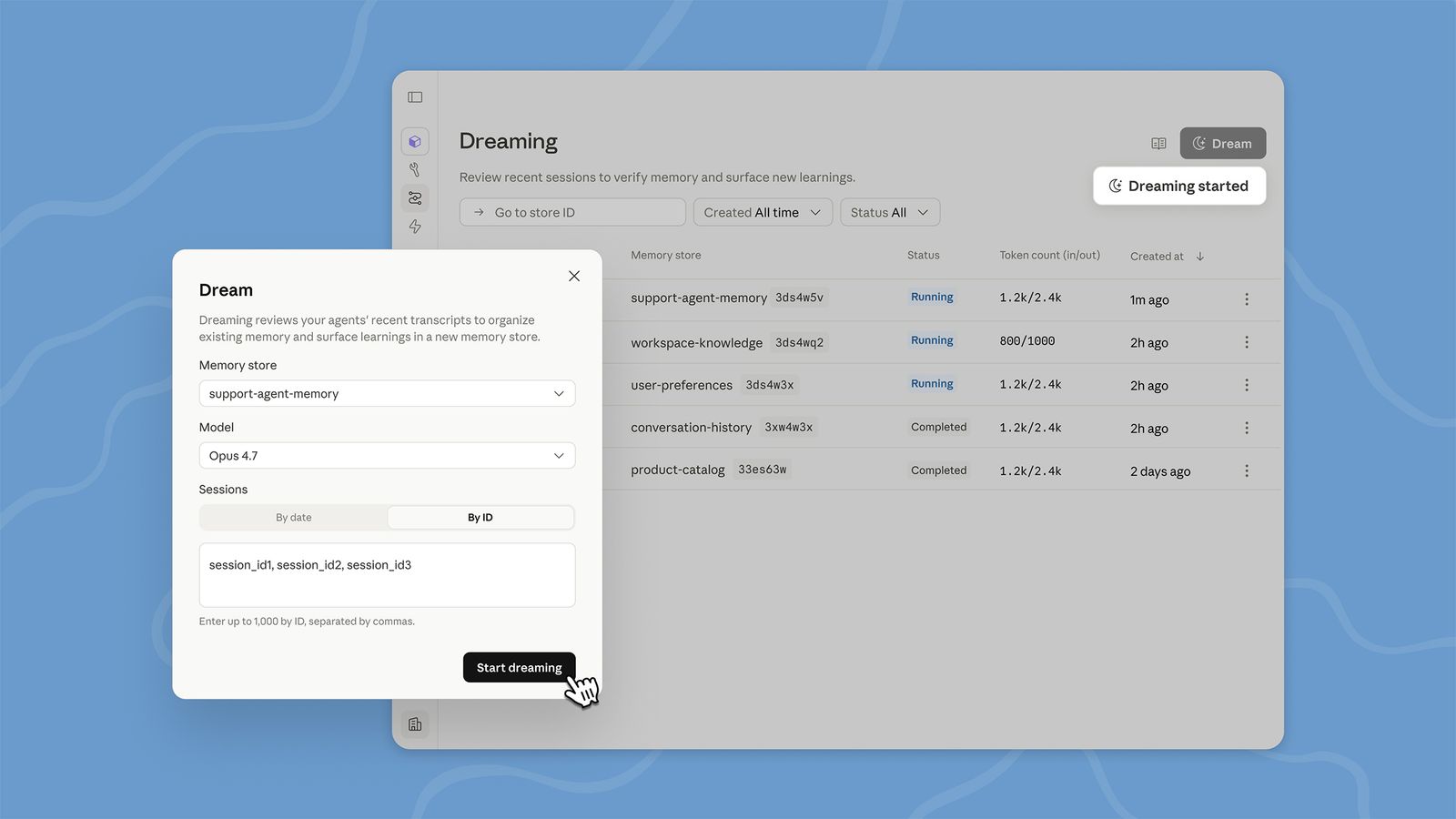

Naming conventions shape how we perceive technology. When tech companies name machine capabilities after human cognitive processes, they blur the critical line between a tool and a thinker. The recent rollout of Anthropic’s “Dreaming” feature exemplifies a broader industry trend that warrants immediate correction.

As generative models become more integrated into our daily lives, the way we describe them matters. Anthropomorphic branding suggests a level of internal experience that simply does not exist within the code.

The Problem with Anthropomorphic Branding

When AI companies use terms like “dreaming,” “memorable,” or “personality,” they create several significant issues for users and developers alike:

- Misleading Implications: Words that evoke subjective states imply an inner experience, even though AI systems lack any form of sentience.

- Ethical Risks: Over-attributing human traits can obscure who bears legal and moral responsibility when systems fail or exhibit bias.

- Skewed User Expectations: Users may over-rely on systems they believe understand context intuitively, leading to dangerous misuse or unsafe automation.

This trend isn't just about marketing flair; it’s about the fundamental way we interpret machine learning capabilities. By using human-centric language, companies mask the technical reality of what these models are actually doing.

Historical Patterns and Current Missteps

We are seeing a consistent pattern of anthropomorphism in modern AI marketing. OpenAI’s “thinking time,” Anthropic’s “memories,” and countless startups claiming their chatbots have “personalities” all follow this problematic blueprint. This language treats code-driven tools as if they possessed lived awareness, echoing earlier eras of computing in which metaphorical branding often overshadowed mechanical reality.

To maintain a healthy relationship with technology, we must keep two core principles in view:

- Philosophical Caution: We must avoid conflating simulation with sentience; storytelling in marketing does not equate to actual awareness.

- Technical Reality: Current models lack consciousness, emotions, or introspection. They are built to process patterns in data and optimize their outputs through statistical learning.

The Consequences of Misnaming AI Features

Misnaming these features fosters misplaced trust, leading users to attribute accountability to systems that cannot bear it. Research in AI ethics has already linked anthropomorphism to distorted risk assessment and flawed policy decisions. Media coverage often amplifies this confusion, framing software instruments as “partners” rather than tools.

As the industry grows, clearer terminology is required to maintain appropriate boundaries between human agency and machine action. This clarity matters all the more under growing regulatory pressure, as oversight bodies increasingly focus on how language shapes accountability frameworks and legal responsibility.

Toward Responsible Naming Practices

To foster transparency, tech leadership should adopt neutral, descriptive terms tied directly to function rather than to human analogues. We need to move away from “clever” branding and toward functional accuracy.

Instead of using vague metaphors, companies should implement the following shifts:

- Replace “Dreaming”: Use descriptive terms such as “post-session analysis” or “cross-session learning.”

- Replace “Memories”: Use technical labels such as “activity logs” or “session records.”

- Prioritize Documentation Clarity: Use precise function names in APIs, SDKs, and developer guides.

- Ensure Policy Alignment: Corporate guidelines must reflect the current technical understanding to avoid misrepresentation.

The momentum behind AI innovation demands careful stewardship of language as much as of code. By breaking the habit of naming features after human mental processes, companies can protect user trust, mitigate systemic risk, and promote realistic expectations. A culture that values technical clarity over marketing cleverness will ultimately strengthen public confidence and support sustainable progress.