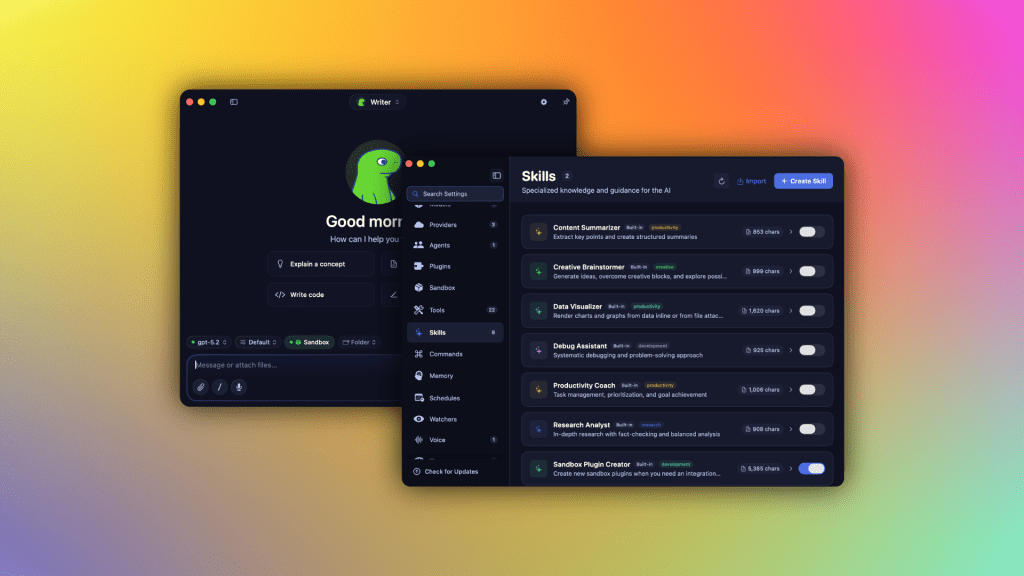

The first time a user opens Osaurus on their Mac, the interaction shifts from passive use to active collaboration with an AI assistant that respects their data and adapts to their workflow. Osaurus runs models locally and can also connect to cloud endpoints, turning the machine into a responsive, privacy-focused companion that handles complex tasks without compromising security.

The Flexibility of Model Deployment

- Local AI execution: Users can deploy models such as Gemma 4, Llama, or DeepSeek V4 directly on macOS, using Apple hardware for inference and avoiding the latency of a network round trip.

- Cloud integration: When local capacity isn't sufficient, Osaurus routes requests to trusted providers such as OpenAI, Anthropic, or xAI (Grok) over encrypted channels.

- Hybrid operation: The system intelligently balances workloads, shifting between local and cloud resources based on context, user preferences, and network conditions.
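The hybrid behavior described above can be pictured as a small routing policy. The following is a minimal sketch only, not Osaurus's actual logic: the `RequestContext` fields, the `choose_backend` function, and the 8192-token local limit are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class RequestContext:
    """Inputs a router might consider (all fields are illustrative assumptions)."""
    prompt_tokens: int             # size of the request (the "context")
    prefer_local: bool             # user preference
    network_ok: bool               # whether a cloud endpoint is reachable
    local_token_limit: int = 8192  # hypothetical capacity of the local model


def choose_backend(ctx: RequestContext) -> str:
    """Return "local" or "cloud" for a single request."""
    fits_locally = ctx.prompt_tokens <= ctx.local_token_limit

    # With no network, the local model is the only option,
    # even if the request is larger than it handles comfortably.
    if not ctx.network_ok:
        return "local"

    # Honor the user's preference when the local model can take the request.
    if ctx.prefer_local and fits_locally:
        return "local"

    # Otherwise (request too large, or no local preference) use the cloud.
    return "cloud"


if __name__ == "__main__":
    print(choose_backend(RequestContext(prompt_tokens=500, prefer_local=True, network_ok=True)))
    print(choose_backend(RequestContext(prompt_tokens=50_000, prefer_local=True, network_ok=True)))
```

A real router would likely weigh more signals (battery state, model quality, cost), but the ordering here shows one way the three factors named above could interact.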

Security and Isolation

By design, Osaurus runs AI processes inside a hardware-isolated sandbox. This approach limits exposure to external attacks, reduces the risk of data leakage, and keeps sensitive information on-device unless the user explicitly shares it. Unlike terminal-heavy developer tools, it offers an intuitive interface suitable for everyday users while maintaining robust protection mechanisms.

Model Ecosystem and Extensibility

- Supports a broad spectrum of open-source and proprietary models including MiniMax M2.5, Qwen3.6, and Liquid AI’s LFM.

- Plugins extend functionality across macOS applications (Mail, Vision, Browser, Git, and more), making the AI an integral part of daily tasks.

- The Model Context Protocol (MCP) server architecture enables third-party clients to access tools and data securely, fostering a vibrant ecosystem of integrations.
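To make the MCP point concrete: MCP messages are JSON-RPC 2.0, and a client invokes a server-side tool with the `tools/call` method. The sketch below only builds such a request. The tool name `search_mail` and its arguments are hypothetical and not taken from Osaurus, and transport (stdio or HTTP) is left out.

```python
import json


def make_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP "tools/call" request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


if __name__ == "__main__":
    # "search_mail" is an invented tool name used purely for illustration.
    print(make_tool_call(1, "search_mail", {"query": "invoice", "limit": 5}))
```

A client would typically send `tools/list` first to discover which tools a given server exposes before calling one.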

Hardware Considerations

Local deployment demands significant resources. The software recommends at least 64 GB of RAM for modest models and 128 GB or more for larger ones like DeepSeek V4. Future improvements in efficiency may reduce these thresholds, but current implementations remain constrained by silicon capacity and thermal limits.
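A rough back-of-the-envelope calculation shows why memory is the limiting factor: weight storage is approximately parameter count times bytes per weight. The sketch below assumes this simple formula and ignores the KV cache, activations, and operating-system overhead, so real requirements are higher. The 70-billion-parameter figure and the 0.75 headroom factor are illustrative assumptions, not numbers from Osaurus.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in gigabytes (10^9 bytes)."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def fits_in_ram(params_billion: float, bits_per_weight: int,
                ram_gb: float, headroom: float = 0.75) -> bool:
    """Check whether the weights fit in a fraction (headroom) of total RAM.

    The headroom factor is an assumed allowance for everything that is
    not weights: KV cache, activations, the OS, and other applications.
    """
    return weight_memory_gb(params_billion, bits_per_weight) <= ram_gb * headroom


if __name__ == "__main__":
    # A hypothetical 70B-parameter model at 4-bit and 16-bit precision.
    print(weight_memory_gb(70, 4))    # 35.0
    print(weight_memory_gb(70, 16))   # 140.0
    print(fits_in_ram(70, 4, ram_gb=64))    # True
    print(fits_in_ram(70, 16, ram_gb=128))  # False
```

On this estimate, quantization to fewer bits per weight is one of the main levers that could lower the RAM thresholds mentioned above.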

Evolution and Adoption

Within a year of its public release, Osaurus surpassed 112,000 downloads, reflecting growing interest among power users seeking control over their AI experience. Its founders, including Terence Pae, formerly of Tesla and Netflix, emphasize usability alongside privacy, positioning the tool as both a productivity enhancer and a foundation for enterprise adoption in regulated sectors such as healthcare and legal services.

Future Outlook

As local AI models improve in intelligence per watt and cloud providers face rising infrastructure costs, the balance of power may shift toward decentralized architectures. Osaurus shows how thoughtful engineering can broaden access to advanced capabilities without sacrificing safety or autonomy, which could make it an important development in how people use AI.