Runway began with a single, visual mission: to give filmmakers tools that could translate imagination into image. But the company’s trajectory has shifted dramatically. What started as a tool for editing suites has evolved into a broader challenge to tech giants, with the specific aim of surpassing Google in artificial intelligence.

The race is no longer just about generating pretty videos; it is about proving that video-based models can offer a deeper, more accurate simulation of reality than text-based systems.

From Visual Training to $5.3 Billion Valuation

Runway’s origins lie in a radical departure from standard AI training methods. The company emerged from NYU Tisch in 2018, and its founders believed that true intelligence required visceral, visual data rather than language processing alone.

While competitors parsed sentences from internet forums, Runway’s early models learned by watching raw footage. They analyzed sunsets over ocean waves and the flicker of a chef’s knife on a cutting board. This approach allowed the AI to understand context, motion, and consequence without relying on textual descriptions.

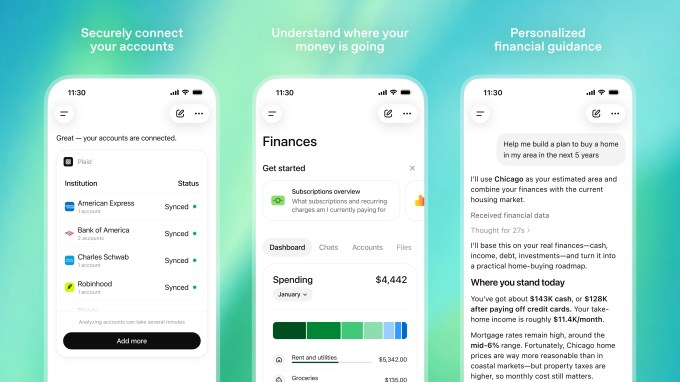

This foundational shift attracted significant attention and capital:

- Industry Validation: Partnerships with major studios like Lionsgate and AMC established Runway’s tools as essential production assets.

- Massive Valuation: The company reached a $5.3 billion valuation as its capabilities expanded beyond simple editing.

- Technical Superiority: By focusing on unstructured visual inputs, Runway aimed to internalize the laws of physics, such as how light bends or fluids flow, directly from observation.

The Compute Wars and Robotics Pivot

As Runway scaled, it faced the same bottleneck as all AI developers: access to sufficient compute power. To train models capable of simulating environments indistinguishable from reality, the company secured critical partnerships with Nvidia and CoreWeave.

These deals provided the GPU access necessary to train massive models, but the application of this technology has expanded unexpectedly. Runway’s engineers argued that video-based models could understand physical laws better than text-based ones. This led to a strategic pivot:

- Robotics Integration: Runway launched a dedicated robotics unit last year to contribute to autonomous navigation trials.

- Controlled Environments: These models are now being tested in controlled warehouse settings for autonomous systems.

- Physical Simulation: Runway argues that, unlike text models, its AI can predict outcomes in physical domains by observing motion and consequence.

The Google Challenge: Genie vs. Runway

The most significant competitor in this new frontier is Alphabet’s Genie model. Google has promised "world models" that can predict outcomes across physical domains, challenging Runway’s claim to fidelity.

However, Runway’s team insists that video intelligence offers a higher degree of accuracy for complex simulations. For example, simulating climate patterns requires more than textual forecasts; it demands modeling atmospheric layers, ocean currents, and human infrastructure interactions simultaneously.

The differences in strategy are stark:

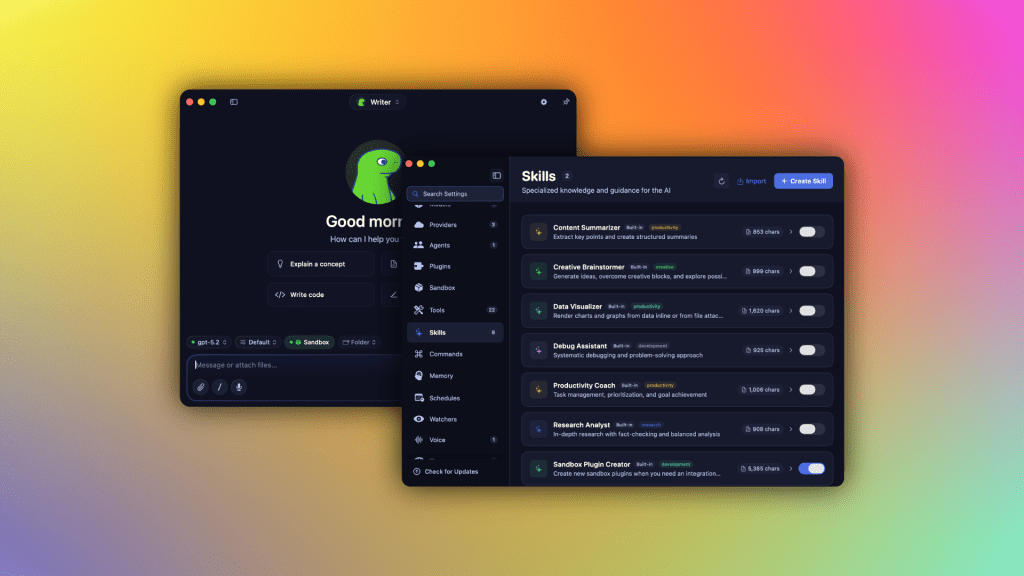

- Data Focus: While OpenAI’s Sora focuses on scripted dialogue and text, Runway indexes billions of hours of video into a living encyclopedia of motion.

- Resource Allocation: Securing cluster time at major cloud providers remains a bottleneck, despite Runway’s ties to CoreWeave.

- Cultural Approach: Free from Silicon Valley orthodoxy, Runway embraces iterative failures that slower-moving incumbents like Google might avoid.

Ethical Guardrails and Future Implications

Runway’s founders view their mission as building scientific infrastructure. They aim to create a digital twin of Earth capable of answering "what-if" scenarios faster than traditional labs can test hypotheses. This could accelerate timelines in drug discovery and climate simulation.

However, this power comes with significant responsibility. The company is actively debating how to encode ethical guardrails into models that can generate plausible but dangerous outcomes.

- Transparency: Runway maintains that transparency about training data sources is nonnegotiable, even as competitors guard proprietary datasets.

- Public Trust: As models become more realistic, maintaining public trust requires clear boundaries on usage.

- Economic Impact: By lowering the barrier to entry, Runway is helping smaller studios leverage AI, leveling the creative playing field globally.

Conclusion

The narrative around Runway has shifted: once seen as a filmmaker’s tool, the company is now cast as a central player in the race against Google and other tech giants. The goal is no longer just to beat them at video generation, but to prove that video can be a form of reasoning.

If Runway’s models achieve true world simulation, industries from pharmaceuticals to aerospace may pivot overnight. The founders still dream of turning everyone into filmmakers, but they now see themselves as architects of intelligence itself. Whether that vision outlasts market cycles depends on compute availability, curiosity, and the belief that seeing is indeed believing.