Roblox’s AI Assistant Gets New Agentic Tools to Plan, Build, and Test Games

Roblox has given its AI assistant new agentic tools to plan, build, and test games, a notable step for a platform built on user-generated creativity that is now automating parts of the creative process itself. The new capabilities turn Roblox Assistant from a plain-language prompt tool into something closer to a collaborative partner. It can now plan, construct, and stress-test game mechanics before assets are finalized, working through multi-step workflows in which it analyzes code and asks developers about their intent.

The move is a shift from basic prompt-response interactions to a system built around a self-correcting loop of creation. By automating these early stages, the new tools are meant to help creators get from abstract concept to concrete implementation faster.

From Prompt to Plan: The Rise of Agentic Development

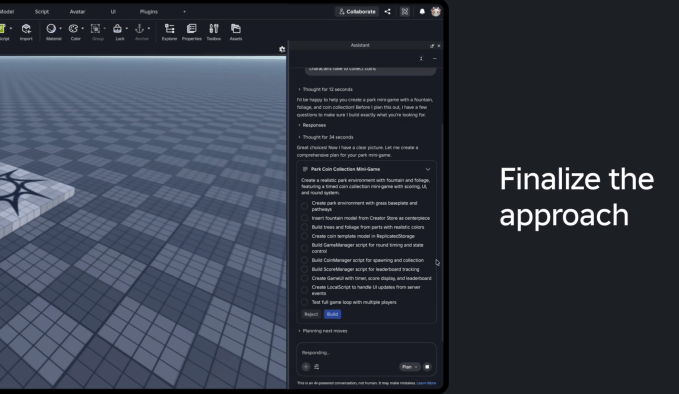

The core friction in current generative AI applications for game design is the "one-shot" failure mode: asking an AI to build a complex world from a single sentence often yields results that miss the creator's intended nuance or structural requirements. Roblox addresses this with Planning Mode, a feature that treats the assistant not as a magic wand but as a senior developer who pauses for clarification before coding begins.

When a creator inputs a request such as "create a park mini-game with a fountain," the system does not immediately render assets. Instead, it initiates a dialogue to refine the vision through iterative questioning:

- Visual Fidelity: The Assistant asks whether the desired look is cartoony or realistic.

- Production Pipeline: It asks how assets like foliage and furniture should be sourced: built from scratch, pulled from the Creator Store, or synthesized procedurally.

This phase allows developers to inject critical context into the plan, ensuring that the resulting execution aligns with their original intent. The output is not a finished game, but an editable action plan that creators can tweak, adding layers of detail before any code is written or geometry generated. Once finalized, this plan becomes the blueprint for the subsequent construction phase.
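To make the idea of an editable action plan concrete, here is a minimal Python sketch. It is not Roblox's implementation or API: the `ActionPlan` and `PlanStep` classes, the `build_plan` function, and the question keys are all hypothetical names chosen for illustration, modeled only on the two clarifying questions described above.

```python
from dataclasses import dataclass, field


@dataclass
class PlanStep:
    """One editable step in a hypothetical action plan."""
    description: str
    notes: list[str] = field(default_factory=list)


@dataclass
class ActionPlan:
    """Hypothetical container for the request, the answers gathered, and the steps."""
    request: str
    answers: dict[str, str] = field(default_factory=dict)
    steps: list[PlanStep] = field(default_factory=list)


# Clarifying questions modeled on the two examples in the article (assumed keys).
QUESTIONS = {
    "visual_style": ("cartoony", "realistic"),
    "asset_source": ("from_scratch", "creator_store", "procedural"),
}


def build_plan(request: str, answers: dict[str, str]) -> ActionPlan:
    """Validate the creator's answers and draft a plan; nothing is built here."""
    for key, allowed in QUESTIONS.items():
        if answers.get(key) not in allowed:
            raise ValueError(f"{key} must be one of {allowed}")
    plan = ActionPlan(request=request, answers=dict(answers))
    plan.steps.append(PlanStep(f"Block out the scene in a {answers['visual_style']} style"))
    plan.steps.append(PlanStep(f"Source foliage and furniture: {answers['asset_source']}"))
    return plan


plan = build_plan(
    "create a park mini-game with a fountain",
    {"visual_style": "cartoony", "asset_source": "creator_store"},
)
# The creator edits the plan before any code or geometry is generated.
plan.steps[0].notes.append("Fountain sits at the center of the park")
```

The point of the sketch is the ordering: answers are collected and validated first, the plan stays plain editable data, and construction would only start from the finalized plan.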

Mesh Generation and Procedural Intelligence

With the plan finalized, the new agentic tools use Mesh Generation and Procedural Model Generation to speed up the physical creation of game worlds. Historically, developers relied on low-fidelity placeholders (simple cubes or spheres) to test mechanics during early prototyping, a process that could obscure how players would interact with polished assets later.

- Mesh Generation: This feature enables creators to request fully textured 3D objects directly within the studio. A developer can ask for a campfire and instruct the AI to add dynamic lighting effects set to nighttime, instantly replacing placeholder geometry with production-ready visuals.

- Procedural Models: The AI leverages its understanding of spatial relationships to generate editable 3D structures defined by code. Creators can command the generation of a bookcase with a specific number of shelves or a staircase with adjustable height, creating reusable building blocks that retain full editability.

These tools do not merely generate static files; they create dynamic systems where attributes like scale and density can be adjusted via prompt. This capability turns the AI into a tool for rapid iteration, allowing designers to explore variations in their world's architecture without manually rebuilding assets from scratch.
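As an illustration of what "3D structures defined by code" can mean, the following Python sketch generates the parts of a bookcase from a few parameters. It is a generic example of parametric modeling, not the output or API of Roblox's Procedural Model Generation; `Part`, `make_bookcase`, and all default dimensions are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Part:
    """A box-shaped part: name, size (x, y, z), and center position (x, y, z)."""
    name: str
    size: tuple[float, float, float]
    position: tuple[float, float, float]


def make_bookcase(shelves: int, width: float = 4.0, height: float = 8.0,
                  depth: float = 1.5, thickness: float = 0.25) -> list[Part]:
    """Return the parts of a bookcase with `shelves` evenly spaced boards.

    The boards include the bottom and top panels, so at least two are required.
    """
    if shelves < 2:
        raise ValueError("a bookcase needs at least a bottom and a top board")
    side_x = (width - thickness) / 2
    parts = [
        Part("LeftSide", (thickness, height, depth), (-side_x, height / 2, 0.0)),
        Part("RightSide", (thickness, height, depth), (side_x, height / 2, 0.0)),
    ]
    # Distance between the centers of adjacent boards.
    spacing = (height - thickness) / (shelves - 1)
    for i in range(shelves):
        y = thickness / 2 + i * spacing
        parts.append(Part(f"Shelf{i + 1}", (width - 2 * thickness, thickness, depth), (0.0, y, 0.0)))
    return parts


bookcase = make_bookcase(shelves=5)
taller = make_bookcase(shelves=7, height=12.0)
```

Because the model is a function of its parameters, changing the shelf count or height regenerates a consistent structure instead of requiring the asset to be rebuilt by hand.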

The Self-Correcting Loop of Automated Testing

Perhaps the most significant shift is the integration of automated testing into the development lifecycle through agentic loops. Roblox Assistant can now act as an autonomous tester: it reads output logs, captures screenshots, and simulates keyboard and mouse input to validate gameplay. When playtesting surfaces bugs or design flaws, the findings are fed back to the assistant, which proposes fixes and incorporates them into the next iteration.

This creates a self-correcting system that, in theory, becomes more accurate over time as it processes more data about what makes an experience robust and bug-free. Looking ahead, Roblox is working toward letting multiple AI agents work in parallel, handling distinct tasks like character creation, level design, and code optimization simultaneously.
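The test-and-fix cycle described above can be sketched as a simple loop. This is a toy model under stated assumptions, not Roblox's system: `run_fix_loop`, `toy_playtest`, and `toy_fix` are hypothetical names, the "game" is just a dictionary of settings, and the playtest is a pair of hard-coded checks standing in for log reading and simulated input.

```python
from typing import Callable

# Hypothetical stand-ins: a playtest returns a list of issue descriptions,
# and a fixer returns a revised build given the current build and one issue.
Playtest = Callable[[dict], list[str]]
Fixer = Callable[[dict, str], dict]


def run_fix_loop(build: dict, playtest: Playtest, fix: Fixer,
                 max_rounds: int = 5) -> tuple[dict, list[str]]:
    """Playtest, apply one fix per reported issue, and repeat until clean.

    Stops after `max_rounds` rounds of fixes and returns the final build
    together with any issues that are still outstanding.
    """
    issues = playtest(build)
    for _ in range(max_rounds):
        if not issues:
            break
        for issue in issues:
            build = fix(build, issue)
        issues = playtest(build)
    return build, issues


def toy_playtest(build: dict) -> list[str]:
    """Report problems found in the toy build."""
    issues = []
    if build.get("spawn_height", 0) < 1:
        issues.append("player spawns below the floor")
    if not build.get("fountain_collision", False):
        issues.append("player walks through the fountain")
    return issues


def toy_fix(build: dict, issue: str) -> dict:
    """Return a revised copy of the build that addresses one issue."""
    revised = dict(build)
    if "spawn" in issue:
        revised["spawn_height"] = 5
    elif "fountain" in issue:
        revised["fountain_collision"] = True
    return revised


final_build, remaining = run_fix_loop({"spawn_height": 0}, toy_playtest, toy_fix)
```

The round limit matters in a loop like this: without it, a fix that never resolves its issue would keep the cycle running indefinitely, so the sketch returns whatever issues remain for a human to review.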

The company also aims to ensure interoperability with third-party tools like Claude, Cursor, and Codex within the Roblox Studio environment. Nick Tornow, Senior Vice President of Engineering, describes this as reducing the barriers between creative vision and execution, allowing creators to move from concept to playable reality in a fraction of the time it traditionally takes.

Shifting Roles in Game Creation

The implication is profound: the role of the game developer on Roblox may soon shift from writing code line-by-line to curating high-level directives and managing an AI workforce that handles the granular mechanics of world-building. While this promises to democratize game creation for those without deep technical skills, it also raises questions about the homogenization of design if the underlying logic becomes too uniform.

For now, however, Roblox is betting that the agentic model will empower a new generation of creators to realize complex visions without getting bogged down in the minutiae of implementation. By utilizing these advanced tools, developers can focus on the "what" and "why" while the AI handles the heavy lifting of the "how."