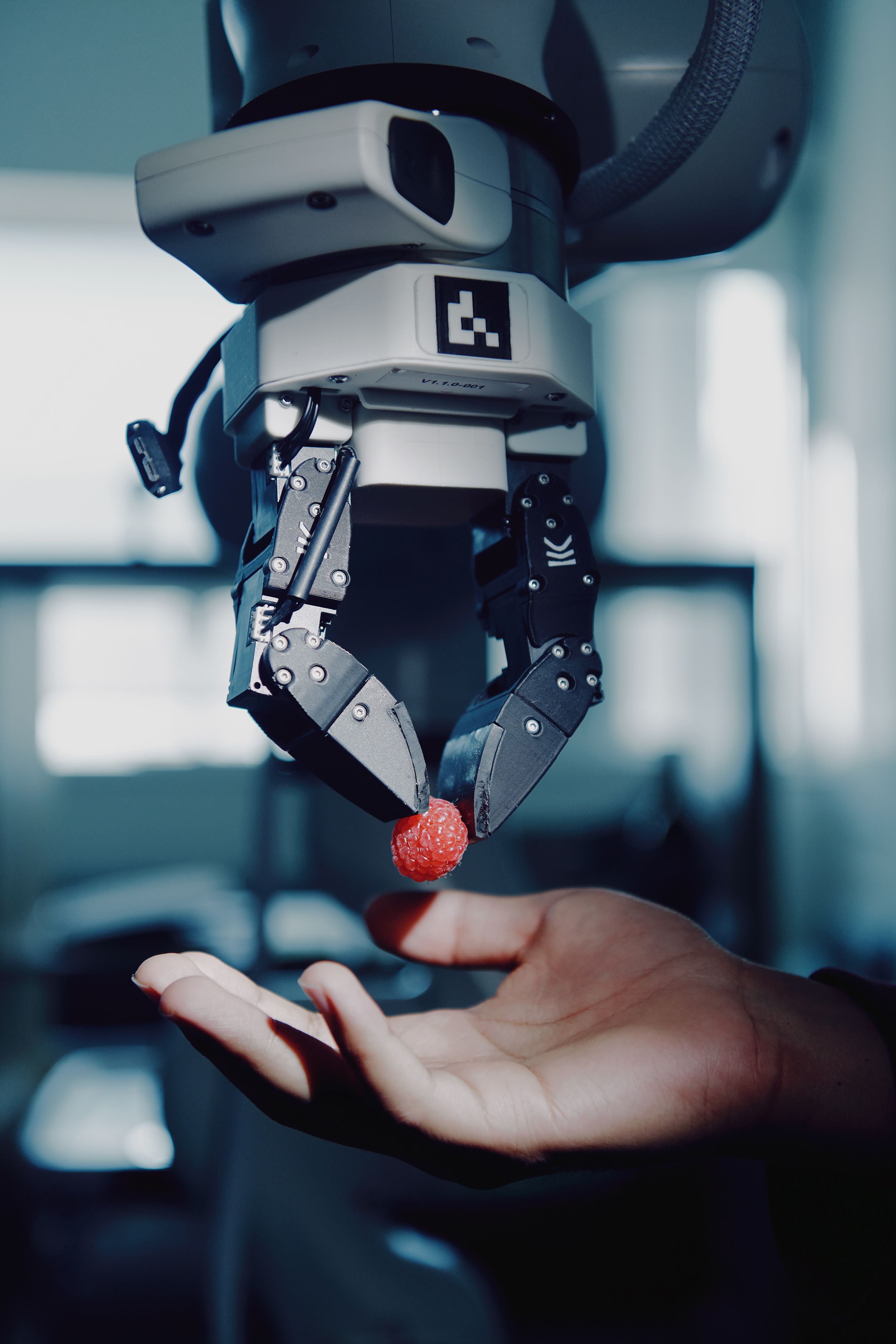

A humanoid robot enters a dimly lit workshop, its sensors scanning a chaotic landscape of scattered tools, oily rags, and heavy machinery. To navigate this environment safely, the machine cannot afford the millisecond-long delay required to translate every visual frame into a linguistic description before deciding on a path. It needs to see, understand, and act instantaneously, processing pixels directly into logic without the bottleneck of text-based translation.

The recent announcement that the sanctioned Chinese AI firm SenseTime releases image model built for speed marks a pivotal shift in how machines perceive reality. Released this week, the SenseNova U1 is an open-source model designed to bridge the gap between digital intelligence and physical interaction. While much of the global conversation around generative AI focuses on the linguistic prowess of models like GPT-4, SenseTime is prioritizing raw processing speed and architectural efficiency.

The Tech Behind Why SenseTime Releases an Image Model Built for Speed

The core innovation within SenseNova U1 lies in its ability to bypass the traditional pipeline of converting images into text descriptions before performing reasoning tasks. Most contemporary multimodal models rely on a "bridge" where visual input is described in natural language, which is then fed into a Large Language Model (LLM). While effective for complex reasoning, this method introduces significant latency and consumes unnecessary computational overhead.

SenseTime has addressed this through a new technical architecture known as NEO-Unify. This structure allows the model to "read" and interpret images natively, treating visual data as a primary source of truth rather than a secondary input to be translated. According to Dahua Lin, cofounder and chief scientist at SenseTime, this direct approach is critical for the next generation of autonomous systems.

The implementation of this SenseTime image model built for speed offers several key advantages:

- Reduced Latency: By eliminating the text-translation step, the model can generate responses and interpret visual changes in near real-time.

- Hardware Optimization: The streamlined process requires significantly less computing power, making it viable for edge computing.

- Robotic Integration: Faster visual processing allows humanoid robots to navigate complex, cluttered environments without hesitation.

- Edge Deployment: The model's compact footprint enables it to run effectively on consumer-grade hardware, including PCs and smartphones.

Navigating the Hardware Divide and Geopolitical Tension

The release of SenseNova U1 arrives during a period of intense geopolitical friction in the semiconductor industry. As US export controls tighten—restricting Chinese firms from accessing high-end Western hardware like Nvidia’s most advanced training chips—SenseTime is forced to innovate within a more constrained ecosystem. This has necessitated a strategic shift toward optimizing software for domestic Chinese silicon.

On the day of the release, several prominent Chinese chip designers, including Cambricon and Biren Technology, announced that their hardware is already compatible with the new model. This synergy suggests a growing attempt to build a self-sustaining AI ecosystem that functions independently of Western supply chains. While SenseTime acknowledges the necessity of high-end chips for rapid iteration, the focus on domestic compatibility provides a critical fallback against escalating trade restrictions.

Reclaiming Market Share Through Open Source

This move toward open source also serves as a strategic maneuver to regain market share. After the initial surge of the ChatGPT era, SenseTime found itself trailing behind newer, more agile competitors like DeepSeek and MiniMax.

By releasing SenseNova U1 for free on platforms like Hugging Face and GitHub, the company is leveraging the global research community to accelerate its development cycle. This continuous, external feedback loop is essential as the company continues to refine its technology.

The Future of Physical AI

Beyond simple image generation, the long-term goal for SenseTime involves integrating these visual capabilities into the burgeoning humanoid robot market. While the company does not manufacture hardware itself, its collaboration with startups like ACE Robotics highlights a clear roadmap toward "Physical AI."

This evolution involves training models in geospatial understanding and complex environmental simulations to prepare them for the unpredictability of the real world. The industry is currently witnessing a divergence: Western giants continue to push massive-scale, closed-source LLMs, while firms like SenseTime focus on specialized, hardware-agnostic models designed for the physical realm. Whether this localized approach can challenge the cognitive depth of larger models remains to be seen, but the arrival of this model ensures the race for real-time perception is well underway.