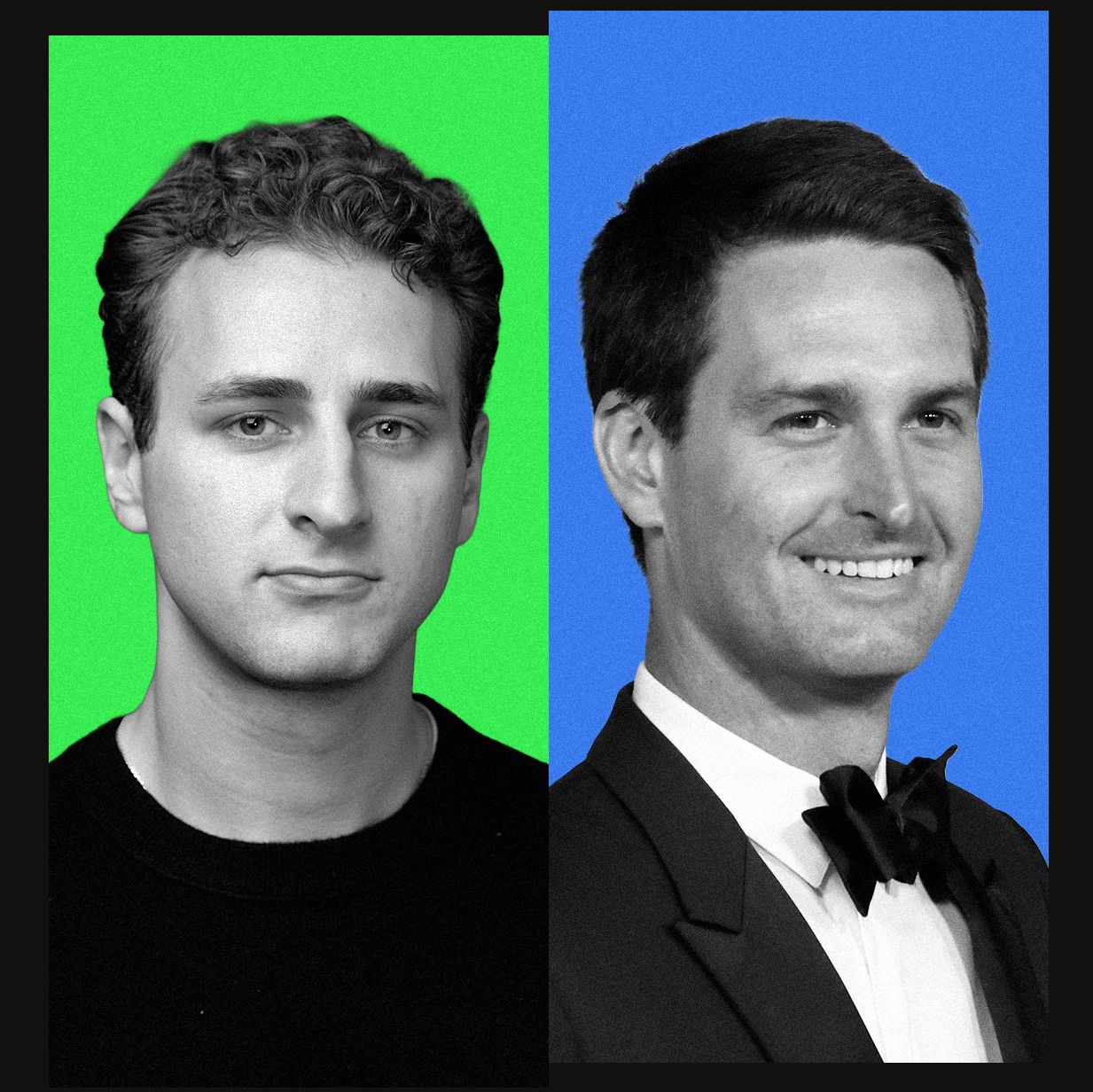

Digital identity is fragile when it is subject to the whims of a single, motivated Wikipedia editor. For one week in April, the portrait on the Wikipedia page of Snap Inc. CEO Evan Spiegel was replaced by that of a technology reporter. The incident prompts the question, "Why does Wikipedia think I’m Evan Spiegel?" It was not a technical glitch or an algorithmic error; it was a deliberate, manual alteration of one of the internet's most trusted information repositories.

The Mechanics of a Manual Override

The disruption began when users visiting the Snapchat founder's page encountered a completely different face. An editor using the pseudonym "Artem G" replaced the official portrait with a photograph of reporter Maxwell Zeff, taken at a TechCrunch conference.

Other contributors immediately tried to correct the error and restore the rightful image, but the page's revision history reveals a persistent tug-of-war between those attempting to maintain factual accuracy and an editor determined to keep the "new" photo in place.

Why Does Wikipedia Think I’m Evan Spiegel? The Ripple Effect

The impact of this single edit rippled far beyond Wikipedia's internal infrastructure. Because modern information ecosystems rely heavily on structured data from authoritative sources, the misinformation propagated through several major platforms:

- Google Search results for Evan Spiegel began displaying Zeff's image as a primary result.

- Google Gemini and other large language models (LLMs), which ingest Wikipedia data for training, struggled to differentiate between the two individuals.

- Social media users and tech colleagues quickly identified the discrepancy, leading to a period of widespread digital confusion.

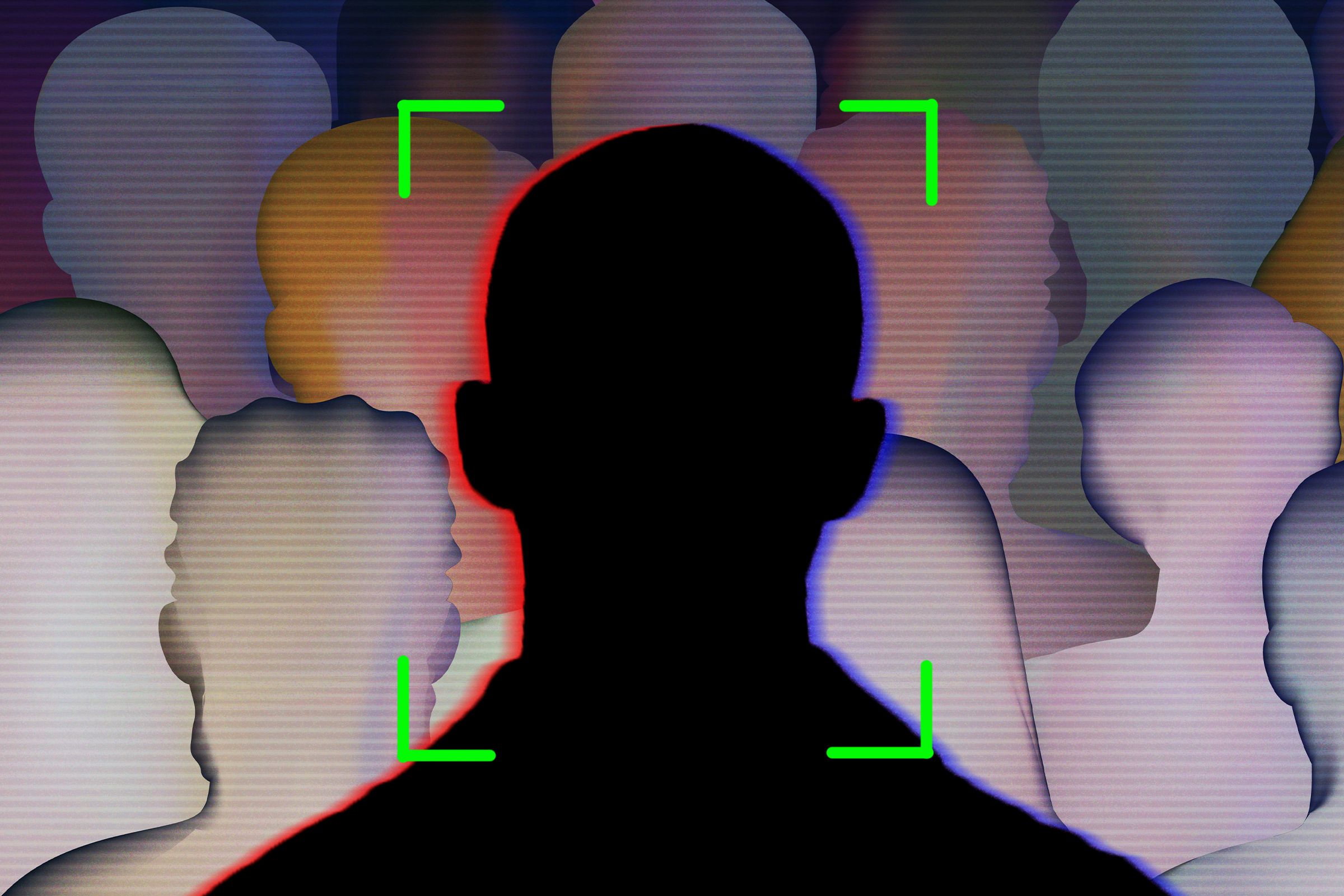

The Vulnerability of Automated Knowledge

The difficulty in policing such edits highlights a growing problem in the age of algorithmic search. When an editor like "Artem G" makes a change—even one that is demonstrably false—the speed at which that error is ingested by automated systems can outpace human correction.

This creates a dangerous feedback loop in which AI-driven tools treat erroneous data as established fact, further cementing the falsehood in the digital record. The cycle explains how "Why does Wikipedia think I’m Evan Spiegel?" became a real question: the machine learns the lie before a human can fix it.
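One way a downstream system could avoid ingesting a contested edit is to wait until a value has survived unchanged for some minimum period. The sketch below illustrates that idea only; it is not how Google, Gemini, or Wikipedia actually work. The `Revision` type, the `stable_image` function, the 48-hour window, the file names, and the timestamps are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Iterable, Optional


@dataclass(frozen=True)
class Revision:
    """One hypothetical entry in a page's revision history."""
    timestamp: datetime
    image: str


def stable_image(
    revisions: Iterable[Revision],
    now: datetime,
    min_age: timedelta = timedelta(hours=48),  # assumed threshold, not a real policy
) -> Optional[str]:
    """Return the newest image that stayed in place for at least min_age.

    A revision's lifetime runs from its own timestamp until the next
    revision replaced it (or until `now` for the latest revision).
    """
    ordered = sorted(revisions, key=lambda r: r.timestamp)
    end = now
    for rev in reversed(ordered):
        if end - rev.timestamp >= min_age:
            return rev.image
        end = rev.timestamp  # this revision is where the older one's lifetime ends
    return None


# Illustrative, made-up history: a long-standing portrait, then an edit war.
now = datetime(2000, 6, 1, 13, 0)
history = [
    Revision(now - timedelta(days=400), "spiegel.jpg"),
    Revision(now - timedelta(hours=3), "zeff.jpg"),
    Revision(now - timedelta(hours=2), "spiegel.jpg"),
    Revision(now - timedelta(hours=1), "zeff.jpg"),
]
print(stable_image(history, now))  # spiegel.jpg
```

The trade-off is latency: legitimate updates are delayed by the same window, and an erroneous edit that goes unchallenged for longer than `min_age` would still be accepted.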

The process of verifying information on Wikipedia often descends into the "Talk Page" arena, where editors engage in disputes to settle changes. In this instance, the editor's refusal to acknowledge the error—insisting that a "new photo is better"—demonstrates how easily personal preference can masquerade as factual updating.

A Verdict on Digital Veracity

This incident serves as a stark reminder of the instability of the "single source of truth" in an era of decentralized information. While Wikipedia is a monumental achievement in human collaboration, it remains susceptible to individuals who can manipulate public perception with minimal resistance.

The fact that Spiegel himself reacted with humor rather than litigation suggests a level of nonchalance that belies the systemic risk involved. As we integrate more AI-driven search and automated knowledge retrieval into our daily lives, the ability of a single person to hijack a digital identity becomes a much larger threat.

Moving forward, the industry must grapple with how to build more resilient layers of verification. Relying solely on the "wisdom of the crowd" is no longer sufficient when that crowd can be easily manipulated by a single, determined actor. The future of reliable information depends on robust, cross-referenced validation protocols that protect our shared digital reality.
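As a rough illustration of what cross-referenced validation could look like, the sketch below accepts a value only when enough independent sources agree on it. The source names, file names, function name, and thresholds are hypothetical, and a real system would also need to establish that the sources are genuinely independent of one another.

```python
from collections import Counter
from typing import Mapping, Optional


def cross_referenced_value(
    claims: Mapping[str, str],
    min_sources: int = 2,  # assumed quorum, chosen for illustration
) -> Optional[str]:
    """Return the value backed by a strict majority of at least min_sources sources.

    `claims` maps a source name to the value that source reports.
    Returns None when no value reaches the quorum.
    """
    if not claims:
        return None
    value, votes = Counter(claims.values()).most_common(1)[0]
    if votes >= min_sources and votes > len(claims) / 2:
        return value
    return None


# Hypothetical sources reporting which portrait belongs to a person.
claims = {
    "wikipedia": "zeff.jpg",
    "company_site": "spiegel.jpg",
    "press_kit": "spiegel.jpg",
}
print(cross_referenced_value(claims))  # spiegel.jpg
```

With this rule a single edited source cannot change the accepted value on its own, although the check is only as strong as the independence of the sources it consults.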