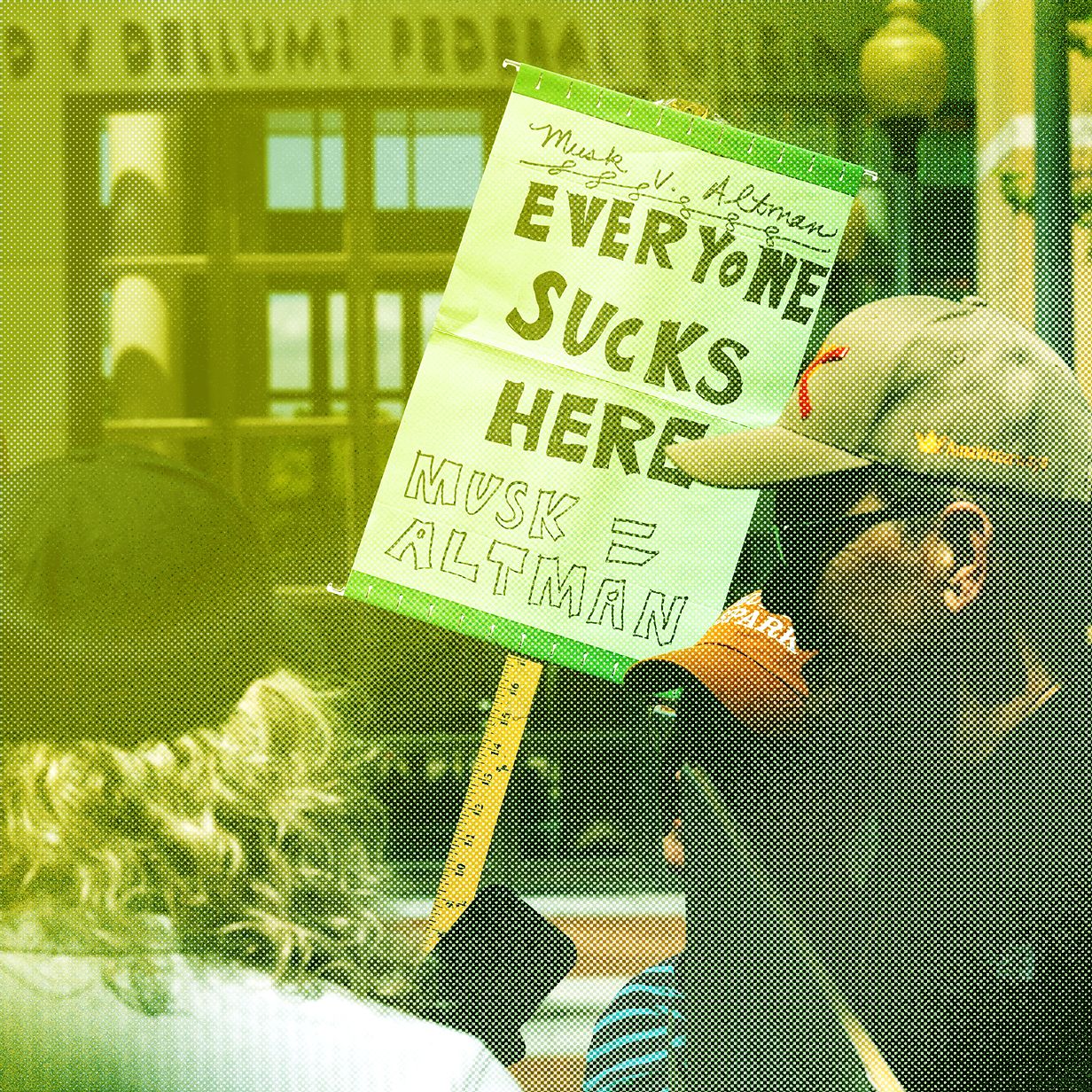

Musk v. Altman Kicks Off: A Battle for OpenAI’s Future

Can a legal dispute between two of the world's most influential billionaires fundamentally rewrite the rules governing artificial intelligence development? As Musk v. Altman kicks off in Oakland, California, the courtroom drama serves as much more than a personal feud. It represents a high-stakes interrogation of OpenAI’s corporate structure and its adherence to its original founding mission.

The trial marks a critical juncture for the AI industry. It examines whether the transition from a non-profit to a profit-driven entity constitutes a breach of trust. Elon Musk alleges that he was misled into contributing millions of dollars under the impression that he was supporting a philanthropic endeavor.

The core of the dispute lies in the company's pivot toward a for-profit arm. While this move is designed to attract the massive capital necessary for large-scale model training, Musk contends that it directly contradicts OpenAI's initial charter.

OpenAI has countered these claims by suggesting the litigation is a strategic maneuver by Musk to damage a competitor. Because the lawsuit followed the launch of his own venture, xAI, its timing has drawn significant scrutiny from industry observers. If the court finds in favor of Musk, the consequences could be catastrophic for OpenAI's leadership, potentially forcing the removal of executives like Sam Altman and Greg Brockman.

The DOJ Gutting the Voting Rights Unit

While the tech industry grapples with corporate governance, the federal landscape is undergoing a significant transformation. Recent investigations into the Department of Justice (DOJ) suggest that its ability to protect democratic processes has been compromised.

The effective hollowing out of the DOJ's voting rights unit through the removal of dozens of experienced lawyers represents a profound shift in federal oversight. This depletion of legal expertise poses a direct threat to the enforcement of the Voting Rights Act.

Without a robust team of litigators, the government's capacity to challenge discriminatory practices or systemic disenfranchisement is severely diminished. The loss of these specialists is a structural weakening of the institutions tasked with ensuring election integrity during polarized election cycles. This "gutting" shifts much of the burden onto local and often underfunded legal challenges.

Is the AI Job Apocalypse Overhyped?

Amidst these institutional shifts, a pervasive anxiety remains regarding the long-term impact of generative AI on the global workforce. Recent waves of layoffs at Meta and other prominent tech firms have reignited the debate over whether we are witnessing an "AI job apocalypse."

While some view these cuts as a sign of imminent replacement by automation, the reality may be more rooted in traditional corporate restructuring and economic volatility. The discourse often ignores how technology is currently being integrated into professional workflows. To understand the industry, one must consider several conflicting factors:

- Economic Restructuring: Many recent layoffs are driven by post-pandemic corrections rather than direct AI intervention.

- Task Augmentation: Current AI models are primarily automating specific, repetitive tasks within larger roles.

- The Training Paradox: While some jobs are disappearing, new roles are emerging in the management and fine-tuning of large language models.

- Displacement Risks: Certain sectors, particularly data labeling and entry-level content creation, face much higher levels of direct vulnerability.

The "apocalypse" narrative may be overhyped, yet it is not without merit. While a total replacement of human intellect remains a distant prospect, the displacement of specific job functions is already an observable reality. The true challenge will be keeping pace with the rapid evolution of what work demands of people.